In the world of machine learning, there are certain core techniques that form the foundation of many advanced algorithms. These techniques are key to understanding the basics of machine learning and can be applied to a wide variety of real-world problems. One of the most important techniques is image classification, which seeks to identify specific objects, features, or patterns in images. This data can provide computer systems with the knowledge they need to understand, and interact with, their environments.

One of the most well-known applications of image classifiers is in facial recognition technology. These algorithms can analyze features of a person’s face and compare them to a database of known faces to identify individuals. This technology is used in security systems, such as in airport security and border control systems, as well as in social media platforms, where it can automatically tag people in photos.

But facial recognition is just one example of the many real-world applications of image classifiers. In the medical field, these algorithms can be used to analyze medical images, such as X-rays and MRI scans, to aid in the diagnosis of diseases. And in the automotive industry, image classifiers are being used to develop self-driving cars. These algorithms can identify and classify objects on the road, such as pedestrians, bicycles, and other vehicles, and help the car navigate safely. The list of application areas goes on and on, from agriculture and environmental conservation to robotics.

If you are interested in better understanding how you can put machine learning to work for you or your organization, a great place to start is Thomas Vikstrom’s tutorials, which approach image classification from a practical perspective and reveal how these fundamental techniques can be applied to real problems. He leverages easy-to-use hardware and the user-friendly Edge Impulse Studio machine learning development platform to demonstrate how accessible these advanced learning algorithms can be.

In a two-part series, Vikstrom walks through how to build the hardware and software needed to classify playing cards, then shows how to sort trash with a robotic arm. As you might have guessed by this point, the underlying technology behind both of these very different applications is a nearly identical image classification algorithm. Focusing on the latter project, Vikstrom wanted to develop a proof-of-concept device that could help sort trash into categories like metals, plastics, and paper for proper recycling.

For the hardware platform, the Silicon Labs xG24-DK2601B EFR32xG24 Dev Kit was selected because it offers a powerful processor, wireless connectivity, and plenty of memory to run a machine learning algorithm that has been highly optimized for edge computing platforms by Edge Impulse Studio. This was paired with a two-megapixel Arducam image sensor to get a look at the trash that is to be sorted. Once recognized, trash is sorted with a desktop-grade, four-axis Dobot Magician robotic arm. The hardware was assembled, then installed in a custom, 3D-printed case that also serves as a stand for the camera.

After selecting the hardware platform, the next step in building an image classifier is capturing sample images of the objects that you would like to classify. These images will be used to help the classification algorithm learn to do its job. Vikstrom linked his smartphone to an Edge Impulse Studio project, then captured images of paper, cardboard, plastic, and metal items. These images were automatically uploaded to the project. To ensure good diversity in the training dataset, he also linked the Silicon Labs development kit to the project and captured some additional images. Images lacking any trash items were also collected, so that misclassifications would not be made when nothing of interest was present in the image frame.

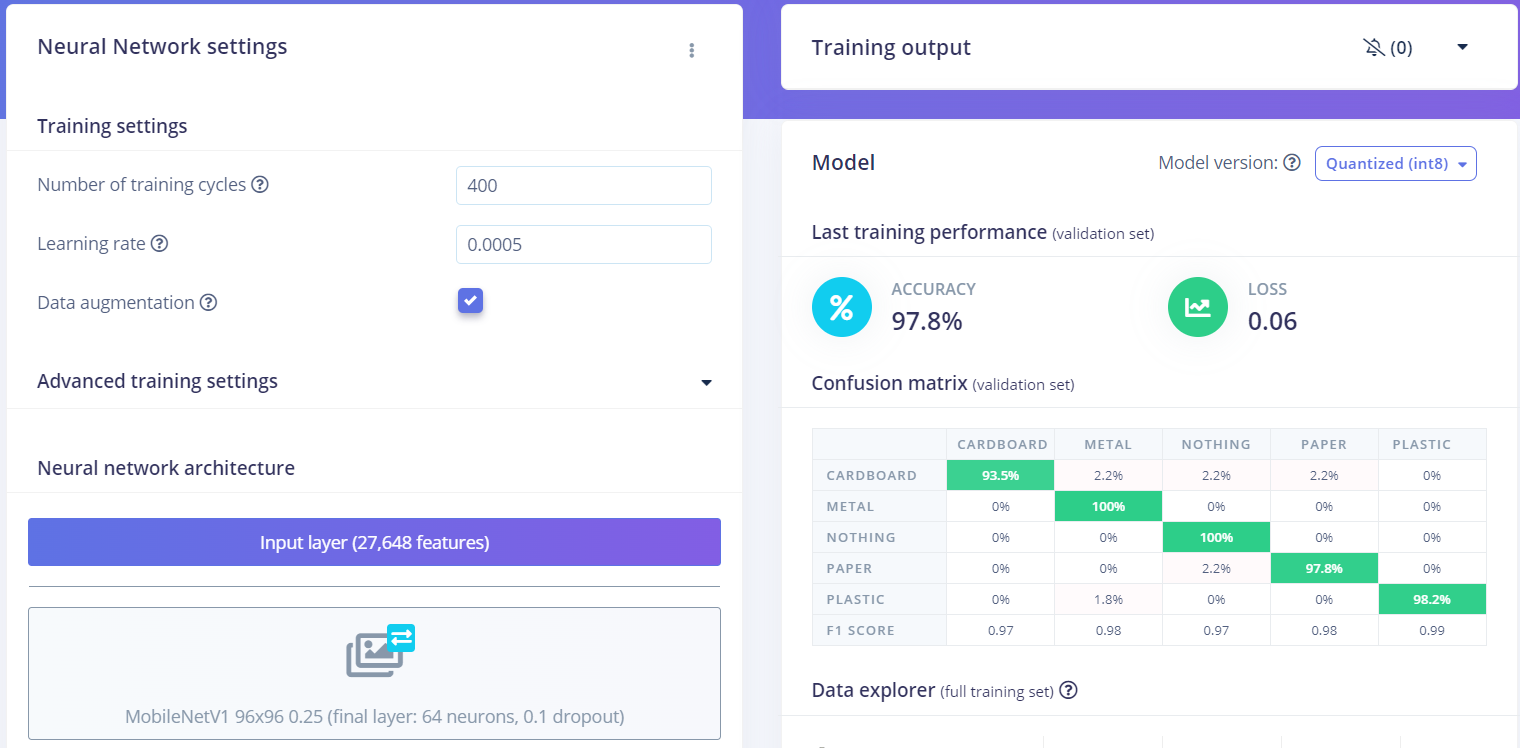

At this point, the stage was set for building the impulse, which defines the workflow that processes data all the way from the raw sensor measurements to the classification prediction. This impulse started with preprocessing blocks that reduce the size of the input image and prepare the data for input into a neural network. Decreasing the image size is critical, because it reduces the computational resources that will be needed by downstream algorithms and allows them to run on resource-constrained hardware platforms. This transformed data was then fed into a pretrained MobileNet V1 model. Leveraging the existing knowledge in the pretrained model allowed Vikstrom to achieve a high degree of classification accuracy after training on a small dataset.
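To make the resizing step more concrete, here is a minimal sketch in plain Python of what a preprocessing block of this kind does: downscale a frame and scale pixel values into a range suitable for a neural network. The 96x96 target size, the synthetic grayscale frame, and the function names are assumptions chosen for illustration. This is not Edge Impulse Studio's implementation, which handles preprocessing for you.

```python
def resize_nearest(image, out_h, out_w):
    """Downscale a 2D grid of pixels using nearest-neighbor sampling."""
    in_h, in_w = len(image), len(image[0])
    return [
        [image[(r * in_h) // out_h][(c * in_w) // out_w] for c in range(out_w)]
        for r in range(out_h)
    ]


def normalize(image):
    """Scale 8-bit pixel values (0-255) to floats in the range [0, 1]."""
    return [[pixel / 255.0 for pixel in row] for row in image]


def preprocess(image, out_h=96, out_w=96):
    """Resize, then normalize. The 96x96 default is an assumed input size."""
    return normalize(resize_nearest(image, out_h, out_w))


# Synthetic 320x240 grayscale frame standing in for a camera capture.
frame = [[(r + c) % 256 for c in range(320)] for r in range(240)]
features = preprocess(frame)

values_before = len(frame) * len(frame[0])
values_after = len(features) * len(features[0])
print(f"{values_before} input values reduced to {values_after}")
```

Fewer input values means less memory and fewer multiply-accumulate operations in the first layers of the network, which is the point the paragraph above makes about resource-constrained hardware.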

A few versions of the model were trained and compared, and it was found that a final layer with 64 neurons gave the best result. This model yielded an average classification accuracy of 97.8%, which is quite good, especially when considering that it was trained on a small dataset. The more stringent model testing tool was also leveraged to validate that the training accuracy results were not simply the result of overfitting the model to the data. This tool uses data that was held out of the training process, and revealed that the classification accuracy had reached 100%. There is no topping that, so Vikstrom moved on to the deployment of the model so that it could finally be put to work on a real problem.
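The idea behind testing on held-out data can be sketched in a few lines of Python. The split fraction, the label lists, and the function names below are hypothetical and only illustrate the principle; they are not the tutorial's data or Edge Impulse Studio's model testing code.

```python
import random


def train_test_split(samples, test_fraction=0.2, seed=0):
    """Shuffle samples and hold out a fraction that training never sees."""
    shuffled = list(samples)
    random.Random(seed).shuffle(shuffled)
    n_test = max(1, int(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]


def accuracy(true_labels, predicted_labels):
    """Fraction of predictions that match the true labels."""
    if len(true_labels) != len(predicted_labels):
        raise ValueError("label lists must have the same length")
    if not true_labels:
        raise ValueError("cannot compute accuracy on an empty set")
    correct = sum(t == p for t, p in zip(true_labels, predicted_labels))
    return correct / len(true_labels)


# Hypothetical held-out labels and the model's predictions for them.
held_out_truth = ["paper", "metal", "plastic", "cardboard", "nothing"]
held_out_preds = ["paper", "metal", "plastic", "paper", "nothing"]
print(f"held-out accuracy: {accuracy(held_out_truth, held_out_preds):.1%}")
```

Because the held-out samples play no part in training, a high score here is better evidence of generalization than the training accuracy alone, although a small test set can still overstate real-world performance.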

The Silicon Labs xG24-DK2601B EFR32xG24 Dev Kit is fully supported by Edge Impulse, so deployment was as easy as downloading a prebuilt firmware image for this board from the deployment tab, then flashing it to the hardware. This firmware has the full machine learning pipeline embedded within it.

The prebuilt firmware continually classifies images and transmits the predictions over a serial stream of data. A Python script was also developed that reads this serial data and uses the predictions to control the actions of the Dobot robot arm. When a new item is detected, the arm reaches down to pick it up with a suction cup, then places it in a pile with other objects made of the same type of material. Vikstrom noted that in his testing the system worked quite well, with only a few test cases misclassified.
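The decision logic of such a script might look something like the sketch below. It is not Vikstrom's script: the `label: score` line format, the 0.8 confidence threshold, the `nothing` background label, and the function names are all assumptions made for illustration. Reading from the serial port and commanding the Dobot arm are deliberately left out, so the example works on a plain list of text lines.

```python
import re

# Assumed line format: optional indentation, a label, a colon, and a score.
PREDICTION_LINE = re.compile(r"^\s*([A-Za-z_][\w ]*?):\s*([0-9]*\.?[0-9]+)\s*$")


def parse_predictions(lines):
    """Collect {label: score} from text lines, skipping anything else."""
    scores = {}
    for line in lines:
        match = PREDICTION_LINE.match(line)
        if match:
            scores[match.group(1)] = float(match.group(2))
    return scores


def choose_bin(scores, threshold=0.8, background="nothing"):
    """Return the material to sort into, or None if the arm should wait."""
    if not scores:
        return None
    label = max(scores, key=scores.get)
    if label == background or scores[label] < threshold:
        return None
    return label


# Hypothetical text as it might arrive over the serial connection.
received = [
    "Predictions:",
    "    metal: 0.02",
    "    nothing: 0.01",
    "    paper: 0.95",
    "    plastic: 0.02",
]
target = choose_bin(parse_predictions(received))
print(target)
```

Requiring a minimum confidence and ignoring the background class keeps the arm from moving when the frame is empty or the classifier is unsure, which mirrors the reason the empty-frame images were collected during training.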

This proof of concept may not be quite ready for a job in a recycling center without a bit of refinement and scaling up. However, going through the process of building it is a fantastic crash course in image classification. We recommend that you start with Part 1 of the tutorial to get your feet wet with a playing card classification example, then make your way over to Part 2 to sort recyclables after you have picked up a few new skills.
