To build smaller, better-performing embedded machine learning models, we lean heavily on signal processing to clean up sensor data and extract features from it before applying any machine learning. This leads to more efficient and more explainable ML models, because common signal processing algorithms are well understood, easy to debug, and can often be calculated very efficiently in hardware. After the signal processing step, a much smaller machine learning model is enough to do the final classification.

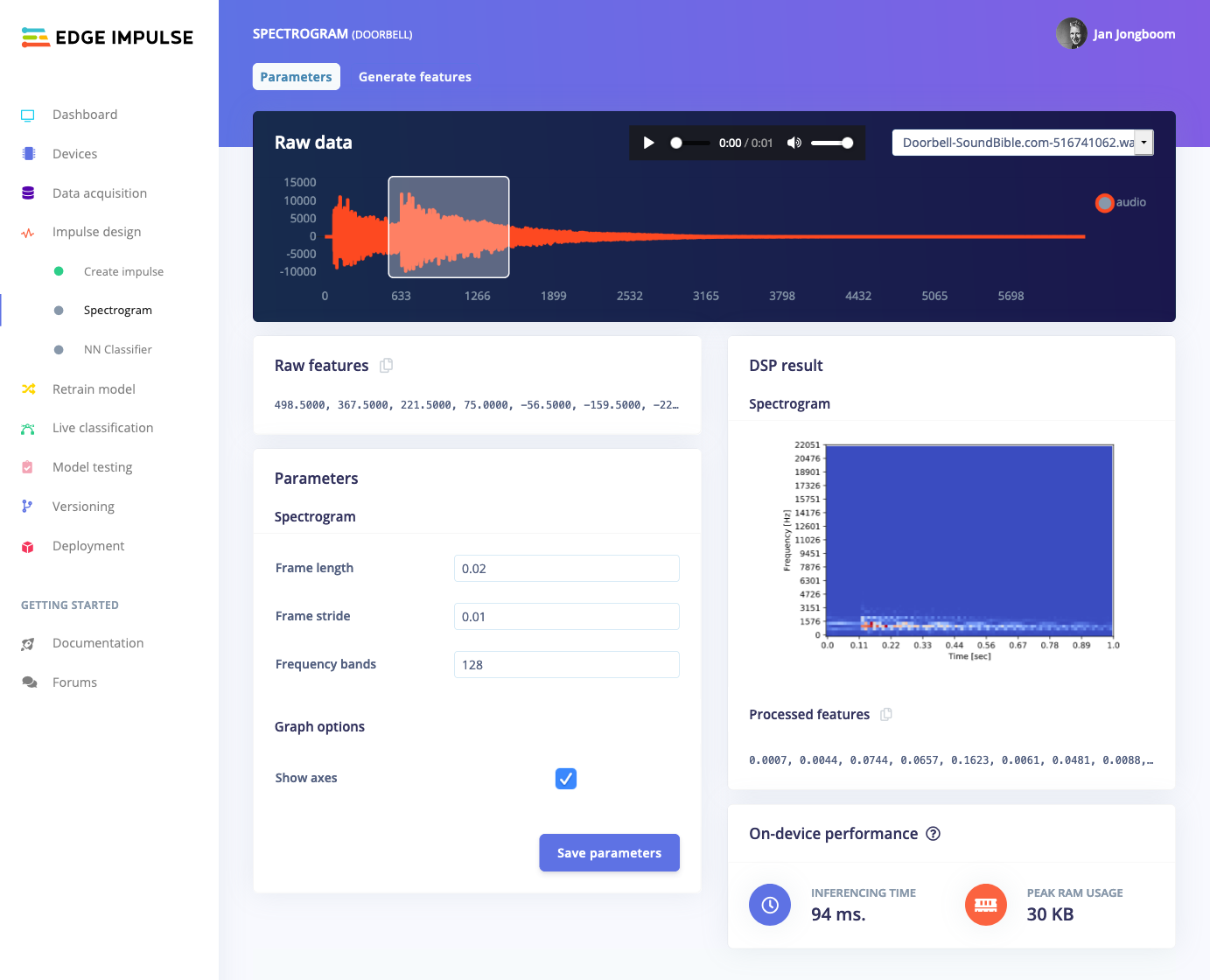

To make it easier to build models that operate on time-frequency domain data - like audio or moving machines - we have introduced a new spectrogram processing block in Edge Impulse. This block is great for detecting non-voice audio - like a bell ringing or a faucet being turned on - and for detecting non-continuous vibration patterns - like a machine making unexpected movements.

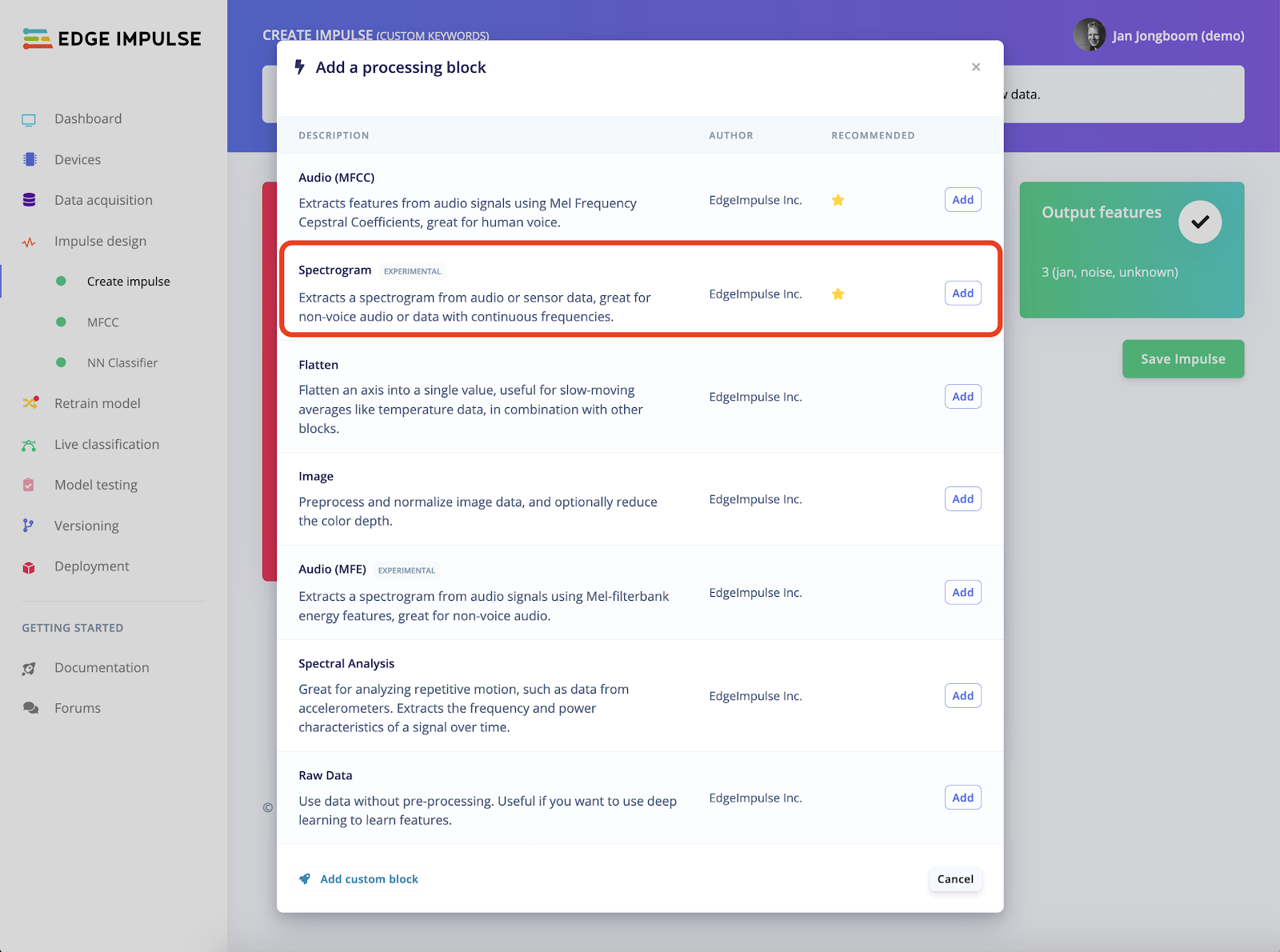
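To give a feel for what a spectrogram contains, here is a minimal NumPy sketch. It is not the implementation used by the Edge Impulse block; the function name `spectrogram`, the `frame_length` and `frame_stride` values, and the Hann window are all assumptions chosen for illustration. The idea is to slice the signal into overlapping frames, take an FFT of each frame, and keep the log power per frequency bin.

```python
import numpy as np

def spectrogram(signal, frame_length=256, frame_stride=128):
    """Illustrative helper (hypothetical, not the Edge Impulse API).

    Returns an array of shape (num_frames, frame_length // 2 + 1)
    holding the log power of each frequency bin in each frame.
    """
    signal = np.asarray(signal, dtype=np.float64)
    if len(signal) < frame_length:
        raise ValueError("signal is shorter than one frame")

    # Number of complete frames that fit in the signal.
    num_frames = 1 + (len(signal) - frame_length) // frame_stride

    # A Hann window reduces spectral leakage at the frame edges.
    window = np.hanning(frame_length)
    frames = np.stack([
        signal[i * frame_stride : i * frame_stride + frame_length] * window
        for i in range(num_frames)
    ])

    # Power spectrum per frame, converted to decibels.
    power = np.abs(np.fft.rfft(frames, axis=1)) ** 2
    return 10.0 * np.log10(power + 1e-10)

# One second of a 1 kHz tone sampled at 16 kHz.
sample_rate = 16000
t = np.arange(sample_rate) / sample_rate
tone = np.sin(2 * np.pi * 1000 * t)

spec = spectrogram(tone)

# Find the frequency bin that carries the most energy on average.
peak_bin = int(np.argmax(spec.mean(axis=0)))
peak_hz = peak_bin * sample_rate / 256
print(spec.shape, peak_hz)
```

Each row of `spec` is one time slice and each column is one frequency band. That 2D layout is what lets a small neural network treat the signal much like an image.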

The spectrogram block is an addition to our existing set of processing blocks, which includes spectral analysis (for continuous motion) and the MFCC and MFE blocks (for human speech). It integrates naturally with all features in the Studio, like the feature explorer and data augmentation, runs efficiently on devices (typically under 100 ms on a Cortex-M4F), and is compatible with continuous audio sampling for real-time classification.

To start using the new block, head to your Edge Impulse project, go to Create Impulse, choose Add a processing block, and select Spectrogram. If your impulse already has a neural network block, you might want to remove and re-add it to make sure a suitable architecture is loaded.

And that’s it! Interested in building a project using the new spectrogram block? Check out our tutorial on Recognizing sounds from audio. The new spectrogram block offers an 8% improvement in accuracy on this model, so it is well worth trying out.

---

Jan Jongboom is the CTO and cofounder of Edge Impulse. He loves any processing block that has pretty visualizations.