With the blistering pace of recent technological progress, it feels like everything in the world is changing faster than we can keep up with. In the world of AI, for instance, we have moved beyond chatbots to agentic systems that can independently plan and execute complex workflows, while physical AI is bringing this intelligence into the real world through robots that learn by observation. Medicine is also seeing a radical shift, as foundation models for diagnosis help doctors detect diseases earlier than ever before and brain-computer interfaces begin to enable neural control of digital devices.

Not everything is changing so fast, however. If you need a reminder of that, look no further than the nearest big city. Traffic congestion, a lack of parking spaces, public safety issues, and infrastructure management problems still plague urban areas around the globe. Smart city technologies are supposed to solve many of these problems, and they could, if only there were more practical and economical solutions available.

Park It Right There!

Jallson Suryo lives in one of the largest cities in Indonesia and has often been frustrated by a lack of available parking spaces and inefficient parking management. As someone who is constantly immersed in technology, he has been considering ways to address this problem intelligently. He believes that with the right combination of hardware and software, cities could significantly ease their parking woes without blowing their budgets. To show what is possible, he has built a proof-of-concept system.

Suryo has developed a vision-based smart parking meter designed to automate on-street parking enforcement. Traditional parking enforcement relies heavily on static signage, time-limited meters, and periodic checks by human attendants. This approach is not only labor-intensive, but also prone to gaps in enforcement, inconsistent compliance, and lost revenue. Cars overstay their allotted time, park in restricted zones, or avoid payment entirely, often because no one is watching closely enough. Suryo’s system instead watches continuously, using edge AI to understand parking behavior in real time and apply rules automatically.

Designing a Smarter City

The hardware platform chosen for this project is the Thundercomm Rubik Pi 3, a compact single-board computer built around a Qualcomm Dragonwing QCS6490 SoC with dedicated AI acceleration. Compared to more common maker platforms, the Rubik Pi offers a better balance of performance and power efficiency, making it well suited for always-on, vision-based applications. The rest of the setup is very modest: a USB webcam, a USB-C power adapter, and optional active cooling for the Rubik Pi. For development and demonstration purposes, Suryo used miniature cars and a small street parking diorama.

On the software side, the Rubik Pi runs Ubuntu 24.04 rather than the default Yocto-based Linux. This choice simplifies development by providing access to standard package managers, modern Python, and full OpenCV and GStreamer support. Once the operating system is in place, the Edge Impulse Linux CLI and Python SDK become the key tools that tie the hardware to the machine learning workflow. This combination allows models trained in the cloud to be deployed efficiently on-device, taking advantage of the Rubik Pi’s AI hardware without requiring deep expertise in low-level optimization.

Building a Computer Vision System

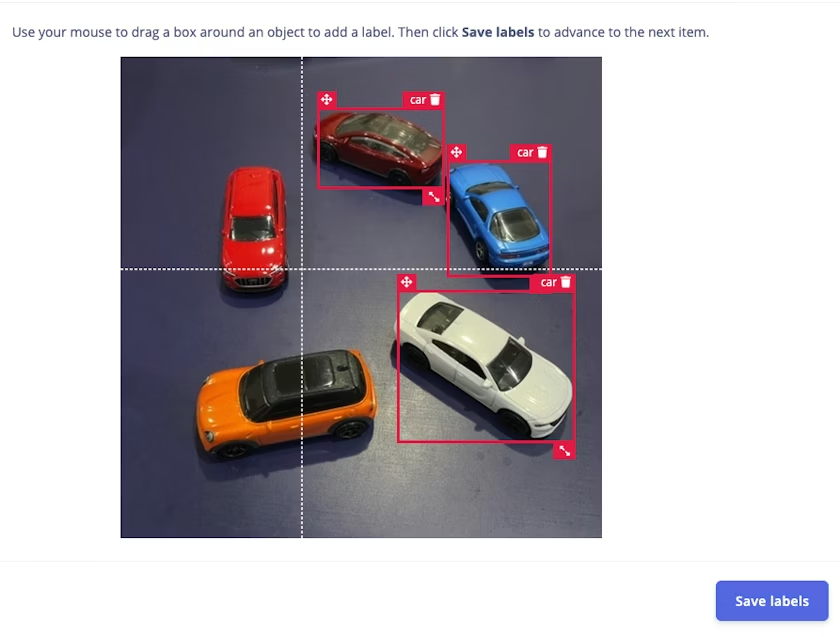

As with any computer vision system, data collection is the foundation. To train a parking-aware model, Suryo needed images of cars in various positions, angles, and lighting conditions that resemble real street parking scenarios. Edge Impulse Studio provides a flexible data acquisition pipeline, allowing images to be uploaded directly from a local machine or captured live from a connected device. By running the Edge Impulse Linux client on the Rubik Pi itself, the system can stream camera images straight into the project, ensuring that the training data closely matches the deployment environment. This device-in-the-loop approach reduces surprises later on, since models often fail in deployment when their training data does not reflect real conditions.

Once collected, the images are labeled using bounding boxes to mark the presence and location of vehicles. While manual labeling is still part of the process, Edge Impulse’s tooling helps streamline the task with features such as AI-assisted auto-labeling. After labeling, the dataset is split into training and testing subsets, typically following an 80/20 ratio, to allow for meaningful evaluation of model performance.
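Edge Impulse Studio performs this split itself, so no code is needed in practice. Purely to illustrate the idea, here is a minimal Python sketch of a reproducible 80/20 split; the function name `split_dataset` and the file names are hypothetical and not part of any Edge Impulse API.

```python
import random


def split_dataset(samples, train_fraction=0.8, seed=42):
    """Shuffle a copy of `samples` and split it into (train, test) lists.

    Hypothetical helper for illustration; a fixed seed makes the split repeatable.
    """
    if not 0.0 < train_fraction < 1.0:
        raise ValueError("train_fraction must be between 0 and 1")
    shuffled = list(samples)
    random.Random(seed).shuffle(shuffled)
    cut = round(len(shuffled) * train_fraction)
    return shuffled[:cut], shuffled[cut:]
```

With 100 labeled images, for example, this yields 80 training images and 20 test images, and no image ends up in both sets.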

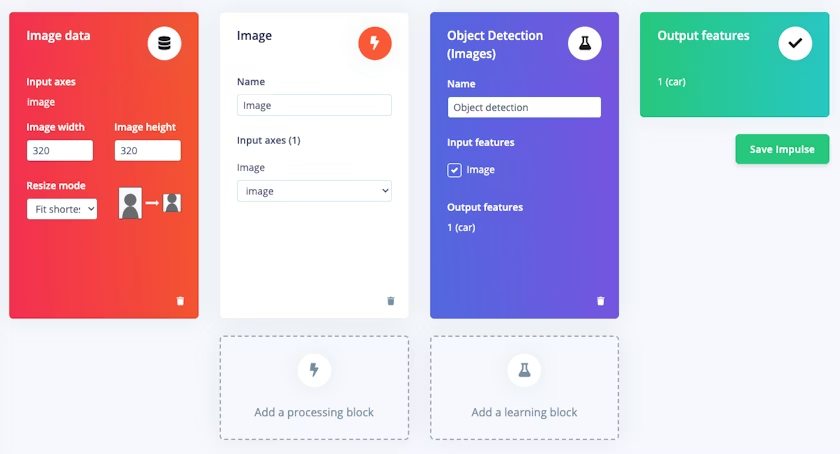

Model creation and training take place entirely within Edge Impulse Studio. For this project, Suryo selected a YOLO-Pro object detection architecture, configured with an input resolution of 320 by 320 pixels. By leveraging transfer learning and pre-trained weights, the model can achieve strong accuracy even with a relatively small dataset. Training parameters such as learning rate, number of training cycles, and model size are exposed through an intuitive interface, allowing rapid experimentation without writing custom training code. GPU-backed training further shortens iteration time, enabling developers to refine their models quickly.
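The 320 by 320 input size has a practical consequence: a USB webcam usually delivers a wider frame, so detections have to be mapped back to camera coordinates before they can be compared against parking zones drawn on the full image. The sketch below assumes the frame was center-cropped to a square and then resized to the model's input size; that preprocessing and the helper `model_box_to_frame` are assumptions for illustration, not a description of Suryo's code.

```python
def model_box_to_frame(
    box: tuple[float, float, float, float],
    frame_w: int,
    frame_h: int,
    input_size: int = 320,
) -> tuple[float, float, float, float]:
    """Map an (x, y, width, height) box from model-input pixels to camera-frame pixels.

    Assumes (hypothetically) that the frame was center-cropped to a square whose
    side is min(frame_w, frame_h) and then resized to input_size x input_size.
    """
    side = min(frame_w, frame_h)
    scale = side / input_size        # camera pixels per model pixel
    off_x = (frame_w - side) / 2     # columns cropped away on the left
    off_y = (frame_h - side) / 2     # rows cropped away at the top
    x, y, w, h = box
    return (x * scale + off_x, y * scale + off_y, w * scale, h * scale)
```

For a 640 by 480 frame, the crop keeps the middle 480 by 480 pixels, so each model pixel covers 1.5 camera pixels and x coordinates shift right by 80: `model_box_to_frame((100, 50, 40, 20), 640, 480)` gives `(230.0, 75.0, 60.0, 30.0)`.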

Despite limited training data, the model achieved perfect precision scores during validation and testing. Edge Impulse’s built-in testing tools make it easy to run the trained model against unseen images and visually inspect detections, providing confidence before deployment. This tight feedback loop—from data to model to evaluation—is one of the platform’s biggest strengths, particularly for edge AI projects where resources are constrained.

Deploying the System

With a few commands, the trained and quantized model is compiled into an optimized binary tailored for the Rubik Pi's Qualcomm AI architecture. The Edge Impulse Linux Runner handles downloading and executing the model, exposing a live inference stream that can be viewed in a browser. On the Rubik Pi, inference takes just one to three milliseconds, dramatically faster than comparable setups on Raspberry Pi hardware.
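On the application side, the Edge Impulse Linux Python SDK's `ImageImpulseRunner` loads the downloaded `.eim` model file and returns each inference from `runner.classify(...)` as a dictionary. In the SDK's examples, object detection results appear under `res["result"]["bounding_boxes"]` as dictionaries with `label`, `value`, `x`, `y`, `width`, and `height` keys. The sketch below assumes that shape and converts detections into corner format; the `car` label name, the 0.5 confidence threshold, and the `Detection` class are hypothetical choices.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    label: str
    score: float
    x1: float
    y1: float
    x2: float
    y2: float


def parse_detections(res: dict, label: str = "car", min_score: float = 0.5) -> list[Detection]:
    """Keep confident detections of one label and convert (x, y, width, height) to corners.

    Assumes the result dictionary shape shown in the Edge Impulse Linux SDK examples.
    """
    boxes = res.get("result", {}).get("bounding_boxes", [])
    detections = []
    for b in boxes:
        if b["label"] != label or b["value"] < min_score:
            continue  # other classes and low-confidence boxes are ignored
        x, y = float(b["x"]), float(b["y"])
        detections.append(Detection(b["label"], float(b["value"]),
                                    x, y, x + b["width"], y + b["height"]))
    return detections
```

Corner format `(x1, y1, x2, y2)` is convenient for the overlap and distance checks that follow.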

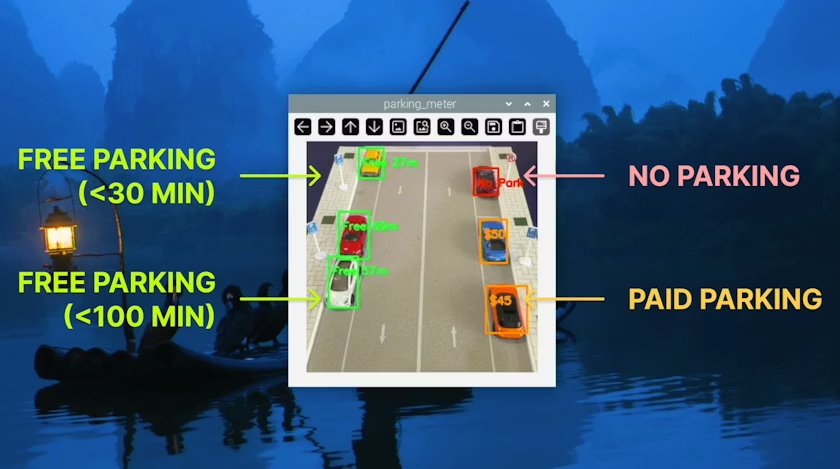

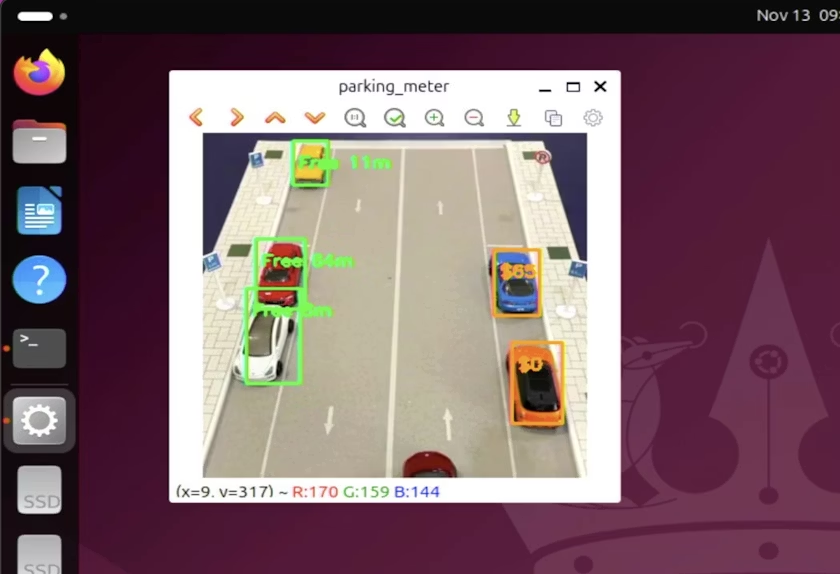

Building on top of this object detection pipeline, Suryo implemented a Python-based smart parking application. The software does more than simply detect cars; it tracks them over time. By comparing bounding boxes across frames using metrics such as Intersection over Union, object size, and distance between centers, the system can determine whether a car is stationary or has moved. Only when a vehicle remains still for several seconds is it considered parked, reducing false triggers from passing traffic.

Each detected car is then associated with a virtual parking zone, labeled A through D, each with its own rules. Some zones allow short-term parking, others prohibit stopping entirely, and one simulates paid parking by incrementing a displayed fee over time. Visual feedback is provided through color-coded bounding boxes and overlaid text, making violations immediately obvious. Although the time scales are compressed for demonstration, the logic mirrors real-world enforcement policies and can be adjusted easily.

In the end, this project demonstrates how far edge AI tooling has come. What once required specialized hardware, large teams, and deep expertise can now be achieved by a single developer using affordable components and a platform like Edge Impulse. By abstracting away much of the complexity of model training and deployment, Edge Impulse allows developers to focus on solving real problems rather than wrestling with infrastructure.

For cities struggling with parking management, systems like this could help bring about a future where parking enforcement is continuous, fair, and data-driven.

To learn more about how you could implement a system like Suryo’s in your city, be sure to take a look at the project write-up.