The dangerous workplaces of the early industrial revolution are a distant memory, with strong safety measures now in place to protect workers. These measures have greatly improved safety, but there is still plenty of room for improvement. According to the International Labour Organization, there are 340 million work-related accidents worldwide each year, leading to 2.3 million deaths. Many of these accidents happen either because a situation arises that a machine safety system cannot detect, or because a worker cannot activate a manual safety mechanism in the heat of the moment. In particular, safety systems and procedures struggle with slips and falls, falling objects, chemical burns, and workers being caught in moving machine parts.

An interesting idea from engineer Solomon Githu may someday help to reduce the severity of workplace accidents by identifying them earlier. Recognizing that a person’s natural response to sudden trouble is to scream or call out for help, he built a low-cost, machine learning-powered device that can detect these events and trigger arbitrary actions, such as shutting down a machine or summoning medical assistance. And because he built the device with a simple method, using an off-the-shelf development kit and Edge Impulse Studio, it could easily be deployed far and wide in workplaces around the globe.

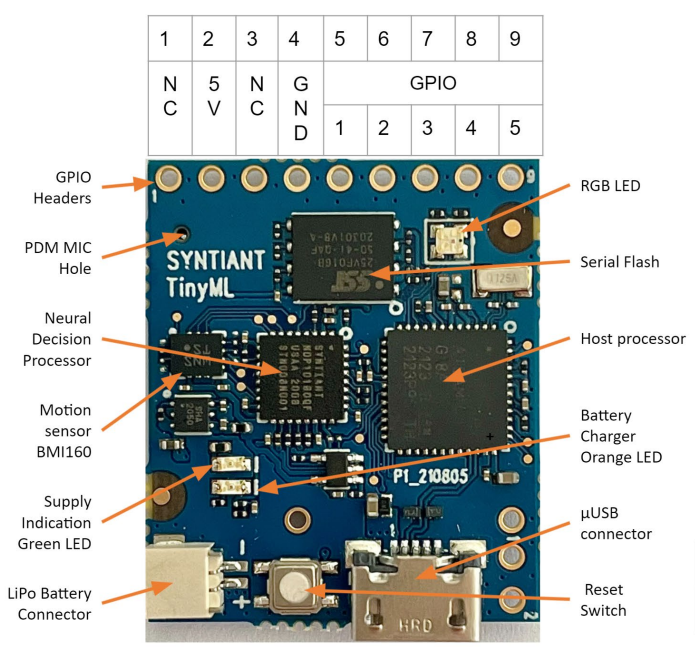

Githu found the perfect platform for his invention in the Syntiant TinyML Development Board. With the ultra-low-power Syntiant NDP101 Neural Decision Processor onboard, this device can perform neural network computations natively, making it ideal for classifying audio samples. And the 2 MB of flash memory and 32 KB of SRAM allow the board to run tinyML models locally, so that inference times can be minimized. The onboard microphone made Syntiant’s TinyML Board an all-in-one hardware solution. To handle the technical details associated with building, training, and deploying a machine learning classification pipeline, Githu turned to Edge Impulse Studio.

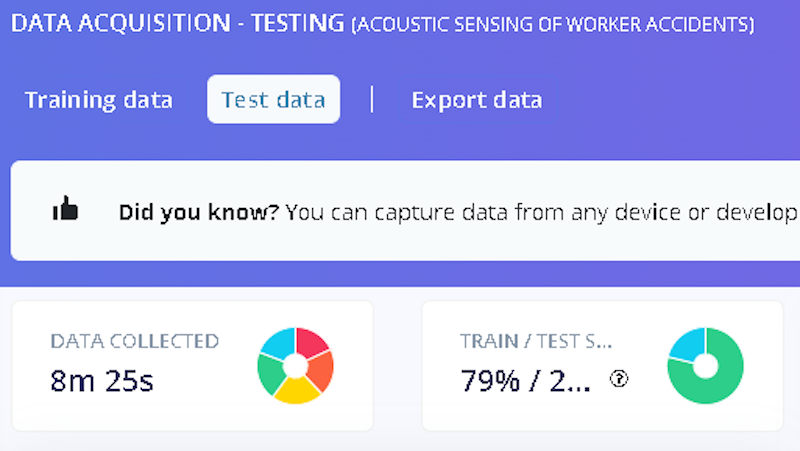

Before a model can be trained, example data needs to be collected. Githu tracked down data representing four different classes: cries, screams, calls for help, and yells of “stop.” Data for a fifth class, “other,” was also collected to represent anything else. This consisted of people talking, machines in operation, vehicles, and all sorts of other common sounds. After gathering about 39 minutes of audio clips, Githu uploaded them to Edge Impulse for further analysis.

With the data all ready to go, Githu clicked his way to a complete machine learning audio classification model faster than you can say Jack Robinson. In Edge Impulse Studio, he created a new impulse consisting of an audio preprocessing step followed by a neural network. The preprocessing step extracts the most important features from the data to optimize the classification pipeline for resource-constrained devices. Next, the hyperparameters of the neural network were tweaked slightly for the task at hand, then the model was trained.
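To give a feel for what an audio preprocessing step does, here is a minimal sketch that slices a clip into short overlapping frames and reduces each frame to a single log-energy value. This is a deliberately simplified stand-in, not the processing block Edge Impulse Studio uses; the function name `frame_log_energies`, the 16 kHz sample rate, and the 25 ms frame and 10 ms hop sizes are all assumptions chosen for illustration.

```python
import math

def frame_log_energies(samples, frame_len=400, hop=160):
    """Split a mono signal into overlapping frames and return one log energy per frame."""
    features = []
    for start in range(0, len(samples) - frame_len + 1, hop):
        frame = samples[start:start + frame_len]
        energy = sum(x * x for x in frame) / frame_len
        features.append(math.log(energy + 1e-10))  # small offset avoids log(0)
    return features

# Synthetic one-second clip (assumed 16 kHz): silence, then a loud 1 kHz tone.
sample_rate = 16000
quiet = [0.0] * (sample_rate // 2)
loud = [0.8 * math.sin(2 * math.pi * 1000 * n / sample_rate)
        for n in range(sample_rate // 2)]

features = frame_log_energies(quiet + loud)
print(len(features), features[0], features[-1])
```

The point of the sketch is the shrinkage: 16,000 raw samples become fewer than a hundred numbers, and the jump from the silent frames to the loud ones is still plainly visible. A real pipeline would keep several frequency-band values per frame rather than one, but the same idea is what makes classification feasible on a board with kilobytes of memory.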

The data acquisition tool set aside a portion of the uploaded data to test the trained model for accuracy. This test revealed that the model achieved an average classification accuracy of 91.91%, which is very impressive considering the small amount of training data supplied. Accuracy would likely climb further if more example data were supplied to the training process.
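The accuracy figure comes from comparing the model's predictions against the known labels of held-out clips. A minimal sketch of that bookkeeping follows; the helper `accuracy_report` and the eight labeled results are hypothetical, made up purely to show the arithmetic, and are not Githu's test data.

```python
from collections import Counter

def accuracy_report(true_labels, predicted_labels):
    """Return overall accuracy and a per-class accuracy dictionary."""
    total = Counter(true_labels)
    correct = Counter(t for t, p in zip(true_labels, predicted_labels) if t == p)
    overall = sum(correct.values()) / len(true_labels)
    per_class = {label: correct[label] / count for label, count in total.items()}
    return overall, per_class

# Hypothetical held-out results, for illustration only.
true_labels = ["cry", "help", "scream", "stop", "other", "other", "scream", "help"]
predicted = ["cry", "help", "scream", "other", "other", "other", "scream", "stop"]

overall, per_class = accuracy_report(true_labels, predicted)
print(f"overall: {overall:.2%}")
for label, acc in sorted(per_class.items()):
    print(f"{label}: {acc:.2%}")
```

Looking at per-class numbers alongside the overall figure is worthwhile for a safety device, since a high average can hide a class, such as a missed “stop,” that the model handles poorly.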

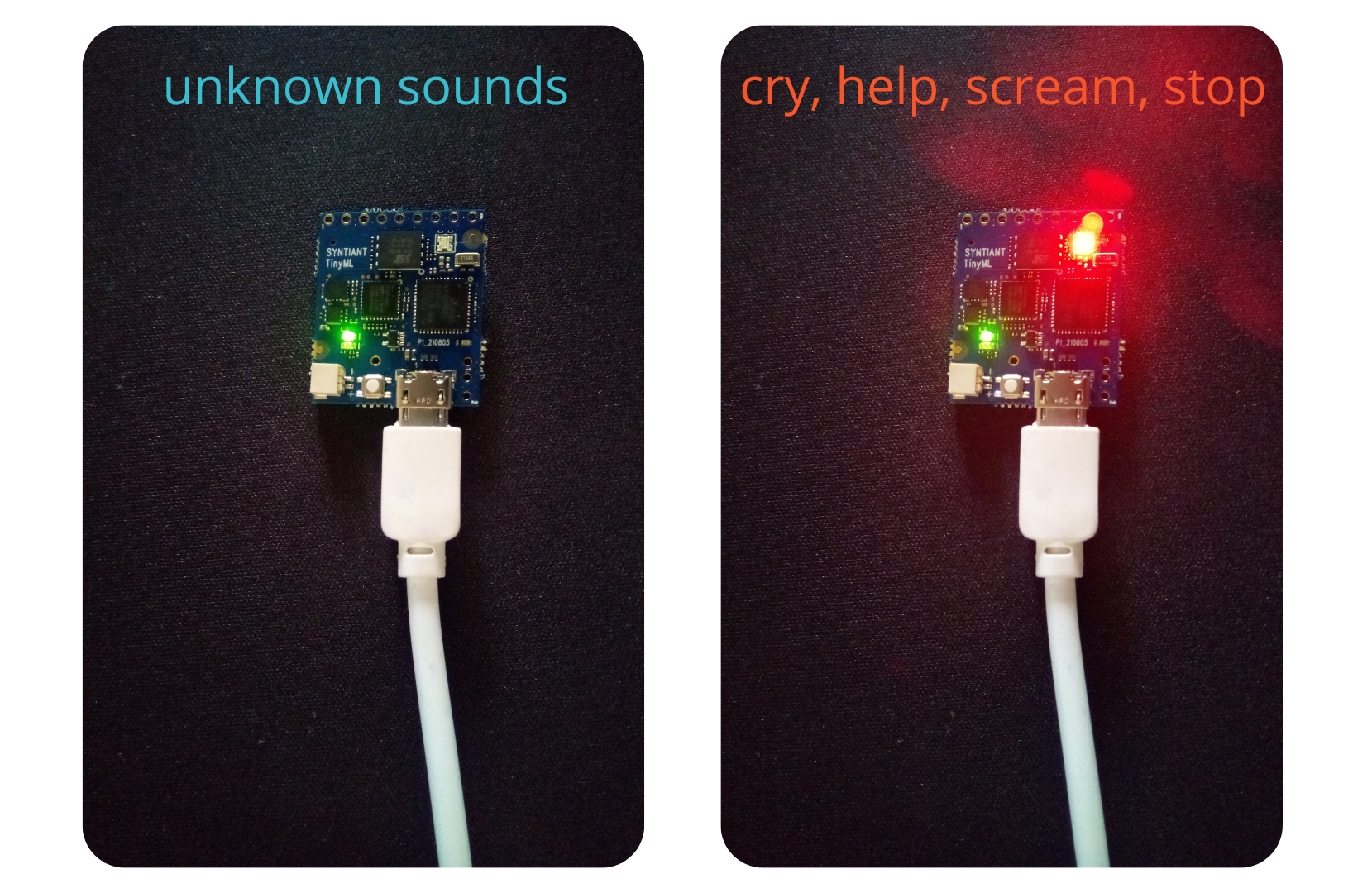

The Syntiant TinyML Board is fully supported by Edge Impulse, so deploying the model to the hardware was a snap. Under the “Deployment” menu item, a “Syntiant TinyML” option in the “Build firmware” section packages up a custom firmware image for download. The package contains a script that can be used to flash the image to the board via USB. Once the board has been flashed, its onboard LED will flash red if any of the target sounds (cry, help, scream, stop) are detected. The light will remain green for all other detected sounds.
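The LED behavior boils down to a simple mapping from the predicted class to a color. The sketch below models that rule in Python rather than in the actual firmware, so the names `TARGET_SOUNDS` and `led_color` are illustrative assumptions and not identifiers from the generated image.

```python
# The four classes that should raise an alarm, as named in the project.
TARGET_SOUNDS = {"cry", "help", "scream", "stop"}

def led_color(label):
    """Return the LED color for one classification result."""
    return "red" if label in TARGET_SOUNDS else "green"

# A made-up stream of classification results.
detections = ["other", "other", "scream", "other", "help"]
colors = [led_color(label) for label in detections]
print(colors)
```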

Before deployment in the real world, that firmware would need to be changed just a bit. Rather than flashing an LED, the GPIO pins on the Syntiant TinyML Board could be used to trigger just about any external action that is needed, like shutting down machinery. Githu demonstrated this with a WaziAct development board, which has a relay that can actuate pumps, solenoids, or other electrical devices. He programmed it to shut down its main running task when it receives a signal from the Syntiant board indicating that a target sound has been detected. With this example, it is easy to imagine how the device could be used in a production environment.
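The receiving side of that arrangement can be sketched as a small state machine: keep the task running until the alarm input goes high, then stop and stay stopped. The version below is a plain Python simulation, not code for the WaziAct board. The `MachineController` class, the list of pin readings, and the choice to latch off until a manual reset are all assumptions made for illustration.

```python
class MachineController:
    """Simulated receiver that stops its task when the alarm input goes high."""

    def __init__(self):
        self.running = True
        self.relay_closed = True  # relay closed means the machine has power

    def on_signal(self, alarm_high):
        # Latch: once stopped, stay stopped even after the alarm input drops.
        if alarm_high and self.running:
            self.running = False
            self.relay_closed = False

    def reset(self):
        # Assumed to be called only after a person has checked the machine.
        self.running = True
        self.relay_closed = True

controller = MachineController()
pin_readings = [False, False, True, False]  # made-up alarm input samples
history = []
for level in pin_readings:
    controller.on_signal(level)
    history.append(controller.running)
print(history)
```

Latching is a design choice worth considering for any real deployment: a scream lasts only a moment, and a machine that restarts by itself as soon as the sound ends could make matters worse.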

Ready to explore how you can keep workers safe on a tiny budget? Grab your hardhat and clone Githu’s Edge Impulse project, then read up on the documentation to help you get started on your own creation.

Want to see Edge Impulse in action? Schedule a demo today.