TinyML models, especially those created with Edge Impulse Studio, are very efficient in terms of processing time. A single inference can complete in anywhere from a few milliseconds to a few tens of milliseconds. Nevertheless, in some critical systems, even such a short processing time is too long if it blocks other CPU activities. To solve this problem, you can use a multicore microcontroller: one core runs the ML operations while another core takes care of other critical operations without being blocked.

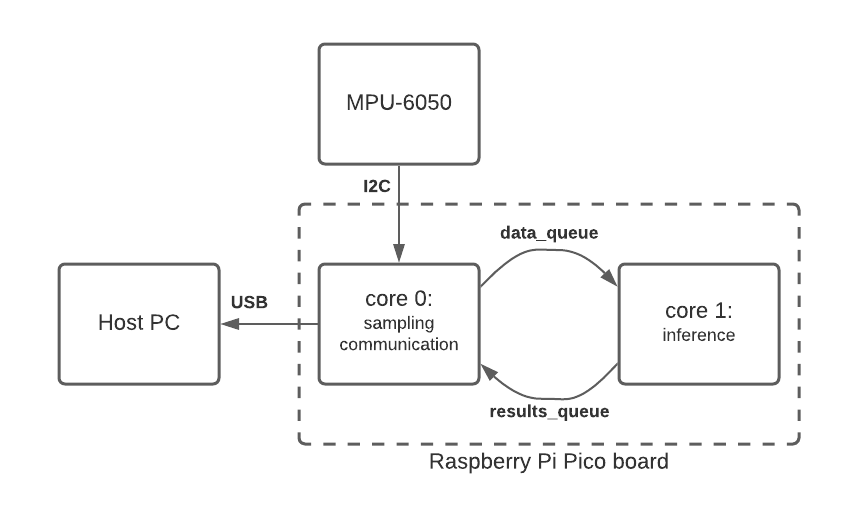

In this example, we will show how to use the Raspberry Pi RP2040 MCU, which has two Arm Cortex-M0+ cores. Both cores are available to the user for any task, and interprocessor communication is handled through shared memory and data queues. The input sensor is an MPU-6050 IMU, which is used to recognize a few types of movement.

In the source code, you will find the code that runs on core 0 (the main function) and the code that runs on core 1 (core1_entry). Data is exchanged between the cores through two queues: data and results.
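
The two-queue pattern can be sketched on a desktop machine, with a thread standing in for core 1. This is a simulation under stated assumptions and not the repository's code. On the RP2040, the Pico SDK provides `multicore_launch_core1` and a `queue_t` type with `queue_add_blocking`, `queue_remove_blocking` and `queue_try_remove`. Here `BoundedQueue`, `Sample`, `Result`, `classify_stub`, `core1_worker` and `run_demo` are hypothetical stand-ins. If you build it with GCC, you may need the `-pthread` flag.

```cpp
#include <condition_variable>
#include <cstddef>
#include <functional>
#include <mutex>
#include <queue>
#include <thread>
#include <vector>

// Bounded FIFO standing in for the Pico SDK's queue_t (host-side substitute).
template <typename T>
class BoundedQueue {
public:
    explicit BoundedQueue(std::size_t capacity) : capacity_(capacity) {}

    // Wait until there is room, then append (compare queue_add_blocking).
    void add_blocking(const T& item) {
        std::unique_lock<std::mutex> lock(mutex_);
        changed_.wait(lock, [this] { return items_.size() < capacity_; });
        items_.push(item);
        changed_.notify_all();
    }

    // Wait until an element is available, then pop it (compare queue_remove_blocking).
    T remove_blocking() {
        std::unique_lock<std::mutex> lock(mutex_);
        changed_.wait(lock, [this] { return !items_.empty(); });
        T item = items_.front();
        items_.pop();
        changed_.notify_all();
        return item;
    }

    // Pop an element only if one is ready; never waits (compare queue_try_remove).
    bool try_remove(T& out) {
        std::lock_guard<std::mutex> lock(mutex_);
        if (items_.empty()) return false;
        out = items_.front();
        items_.pop();
        changed_.notify_all();
        return true;
    }

private:
    std::size_t capacity_;
    std::queue<T> items_;
    std::mutex mutex_;
    std::condition_variable changed_;
};

struct Sample { float x, y, z; };  // hypothetical payload of the data queue
struct Result { int label; };      // hypothetical payload of the results queue

// Hypothetical stand-in for the real inference call.
Result classify_stub(const Sample& s) {
    return Result{s.z > 5.0f ? 1 : 0};
}

// Plays the role of core1_entry: take a sample, classify it, publish the result.
void core1_worker(BoundedQueue<Sample>& data, BoundedQueue<Result>& results,
                  std::size_t count) {
    for (std::size_t i = 0; i < count; ++i) {
        results.add_blocking(classify_stub(data.remove_blocking()));
    }
}

// Plays the role of main on core 0: hand samples over, stay busy with other
// work, and pick up each result once it is available.
std::vector<int> run_demo(const std::vector<Sample>& samples,
                          unsigned long& other_work_done) {
    BoundedQueue<Sample> data(2);
    BoundedQueue<Result> results(2);
    std::thread core1(core1_worker, std::ref(data), std::ref(results),
                      samples.size());
    std::vector<int> labels;
    for (const Sample& s : samples) {
        data.add_blocking(s);
        Result r{};
        while (!results.try_remove(r)) {
            ++other_work_done;  // core 0 keeps running in the meantime
            std::this_thread::yield();
        }
        labels.push_back(r.label);
    }
    core1.join();
    return labels;
}
```

The point of the sketch is the polling loop in `run_demo`: core 0 never waits on the classifier, it only checks whether a result has arrived and otherwise carries on with its own work.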

How do I get started?

First, get a Raspberry Pi Pico board, an MPU-6050 module, a breadboard and a few cables. Connect everything as shown below.

- Set up the Pico SDK.

- Clone the Multicore Pico Example repository.

- Go to Edge Impulse Studio and clone the Continuous motion recognition project into your account.

- In the project, go to Deploy, select C++ library and build.

- From the downloaded archive, copy the edge-impulse-sdk, model-parameters and tflite-model directories into the Multicore Pico Example repository.

- Build the project as described in the README.

- Deploy the firmware to the Pico board and watch the results on the serial port.
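
If you want to adapt what is printed on the serial port, the sketch below shows one way to turn per-class scores into a single line of text. It is an illustration only: the firmware's actual output format may differ, the `ClassScore` type and both functions are hypothetical, and the labels in the usage note are assumed to match the Continuous motion recognition project.

```cpp
#include <cstddef>
#include <cstdio>
#include <string>
#include <vector>

// Hypothetical container for one class label and its score.
struct ClassScore {
    std::string label;
    float value;
};

// Return the index of the highest-scoring class, or -1 if the list is empty.
int top_class(const std::vector<ClassScore>& scores) {
    int best = -1;
    for (std::size_t i = 0; i < scores.size(); ++i) {
        if (best < 0 || scores[i].value > scores[best].value) {
            best = static_cast<int>(i);
        }
    }
    return best;
}

// Build one line of text: every score, followed by the winning label.
std::string format_result(const std::vector<ClassScore>& scores) {
    std::string line;
    char buf[64];
    for (std::size_t i = 0; i < scores.size(); ++i) {
        std::snprintf(buf, sizeof buf, "%s%s: %.2f", i ? ", " : "",
                      scores[i].label.c_str(), scores[i].value);
        line += buf;
    }
    const int best = top_class(scores);
    if (best >= 0) {
        line += " -> ";
        line += scores[best].label;
    }
    return line;
}
```

For scores of 0.02, 0.01, 0.95 and 0.02 on the labels idle, snake, updown and wave, `format_result` returns a line that ends in `-> updown`, which you could then pass to `printf` on the board.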

We are very excited to see what you build with the Raspberry Pi Pico and Edge Impulse. Please post any questions you have and any projects you create over on our forum or tag @EdgeImpulse on our social media channels!