Deploying machine learning (ML) models on microcontrollers is one of the most exciting developments of the past few years, allowing small battery-powered devices to detect complex motions, recognize sounds, classify images, or find anomalies in sensor data. To allow any developer to build these models and deploy them on real hardware, Microchip has added support for Edge Impulse to its MPLAB X IDE. This allows developers to collect data from real devices, train machine learning models, and deploy to any Microchip Arm® Cortex®-based 32-bit microcontroller or microprocessor.

While TinyML is exciting, it can also be a complicated endeavor. It requires high-resolution sensor data, an excellent understanding of signal processing, training and verifying machine learning models, and finally integrating those models into your device firmware. With Edge Impulse and Microchip, this all becomes a lot easier.

By installing the machine learning plugin for MPLAB X, you can collect real-world sensor data from your real devices and send the data straight to Edge Impulse. From here you’ll have a cloud-based environment to explore your datasets, build feature extraction pipelines, build machine learning models, and then deploy your trained model (including all DSP and ML code) as C++ source code. You can then integrate the model into your applications with a single function call. Your sensors are then a whole lot smarter, being able to make sense of complex events in the real world.

To build a first project that can recognize gestures based on accelerometer data, you can either grab the SAMD21 ML Evaluation Kit, which has a Cortex-M0+ MCU and an IMU sensor, or quickly adapt your own firmware for any Microchip board:

- Sign up for an Edge Impulse account - it’s free for developers.

- Install MPLAB X, and add the Data Visualizer plugin via Tools > Plugins.

- In your firmware, write your sensor data over UART, encoded using the MPLAB Data Visualizer format. The example project for the SAM-IoT is located here.

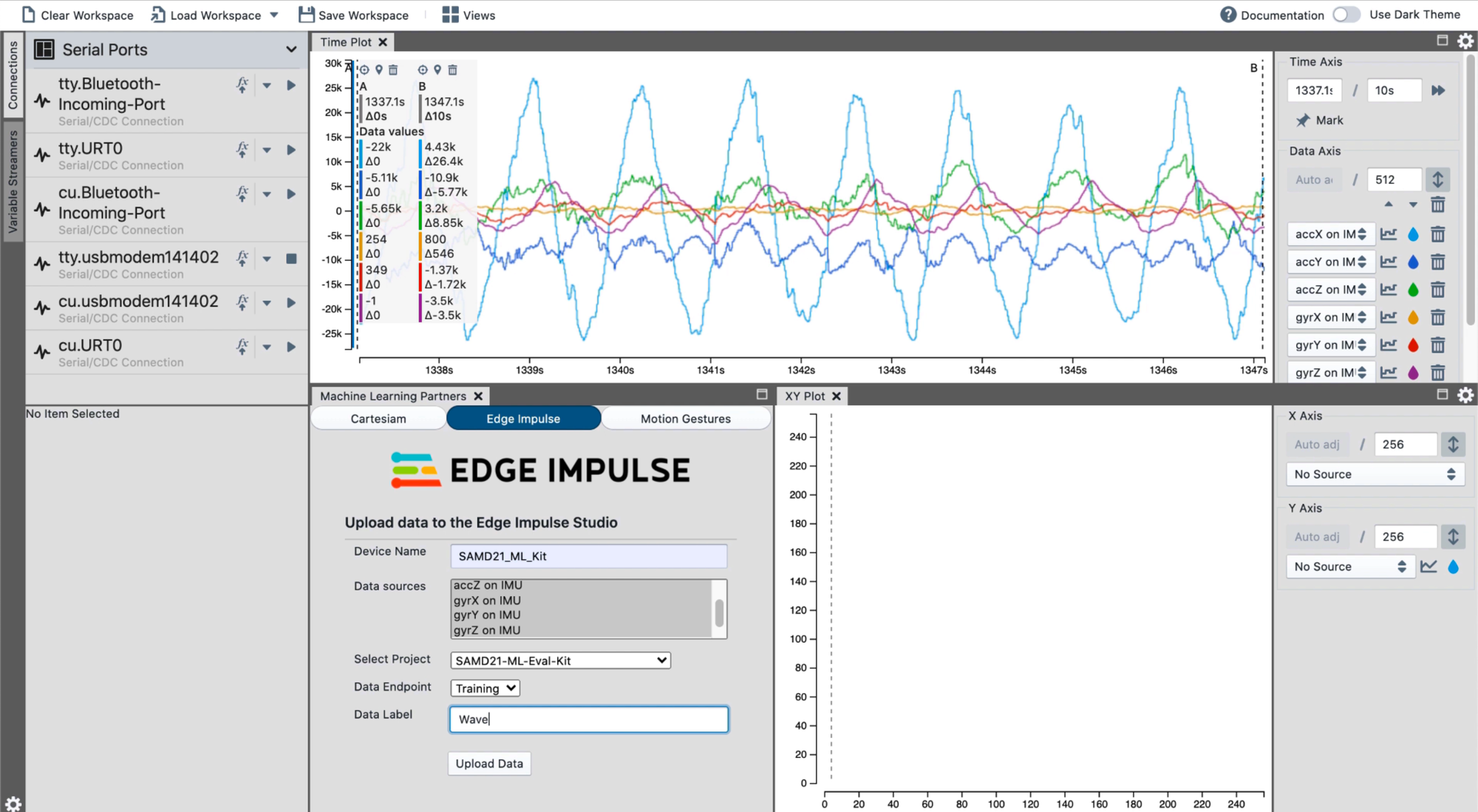
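As a rough illustration of the firmware step above, the sketch below packs one 3-axis accelerometer sample into a framed byte sequence that could then be written to the UART. It assumes the Data Visualizer stream framing of a start byte, a little-endian payload, and an end byte that is the bitwise complement of the start byte. The start byte value `0xA5`, the `int16_t` sample layout, and the name `encode_frame` are illustrative assumptions rather than values taken from the example project, so check them against your own Data Visualizer configuration.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Assumed framing: [start byte][payload, little-endian][~start byte].
// The start byte value is a hypothetical choice for this sketch.
constexpr std::uint8_t kStartOfFrame = 0xA5;
constexpr std::size_t kAxes = 3;
constexpr std::size_t kFrameSize = 2 + kAxes * sizeof(std::int16_t);

// Serialize one accelerometer sample (x, y, z) into a single frame.
std::vector<std::uint8_t> encode_frame(const std::array<std::int16_t, kAxes>& sample) {
    std::vector<std::uint8_t> frame;
    frame.reserve(kFrameSize);
    frame.push_back(kStartOfFrame);
    for (std::int16_t value : sample) {
        const auto bits = static_cast<std::uint16_t>(value);
        frame.push_back(static_cast<std::uint8_t>(bits & 0xFF));         // low byte first
        frame.push_back(static_cast<std::uint8_t>((bits >> 8) & 0xFF));  // then high byte
    }
    // End byte is the bitwise complement of the start byte.
    frame.push_back(static_cast<std::uint8_t>(~kStartOfFrame));
    assert(frame.size() == kFrameSize);
    return frame;
}
```

In real firmware, the returned bytes would be handed to whatever UART write routine your project already uses, once per sample.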

- Open the Data Visualizer, via Tools > Embedded > Data Visualizer.

- In the Data Visualizer, under ’Serial Ports’, select your device, and follow the steps in this guide under Procedure.

- Under ’Machine Learning Partners’, select ’Edge Impulse’, log in, and set your project.

- Collect some data (it should show up under ’Time plot’) and select ’Mark’ to mark a selection.

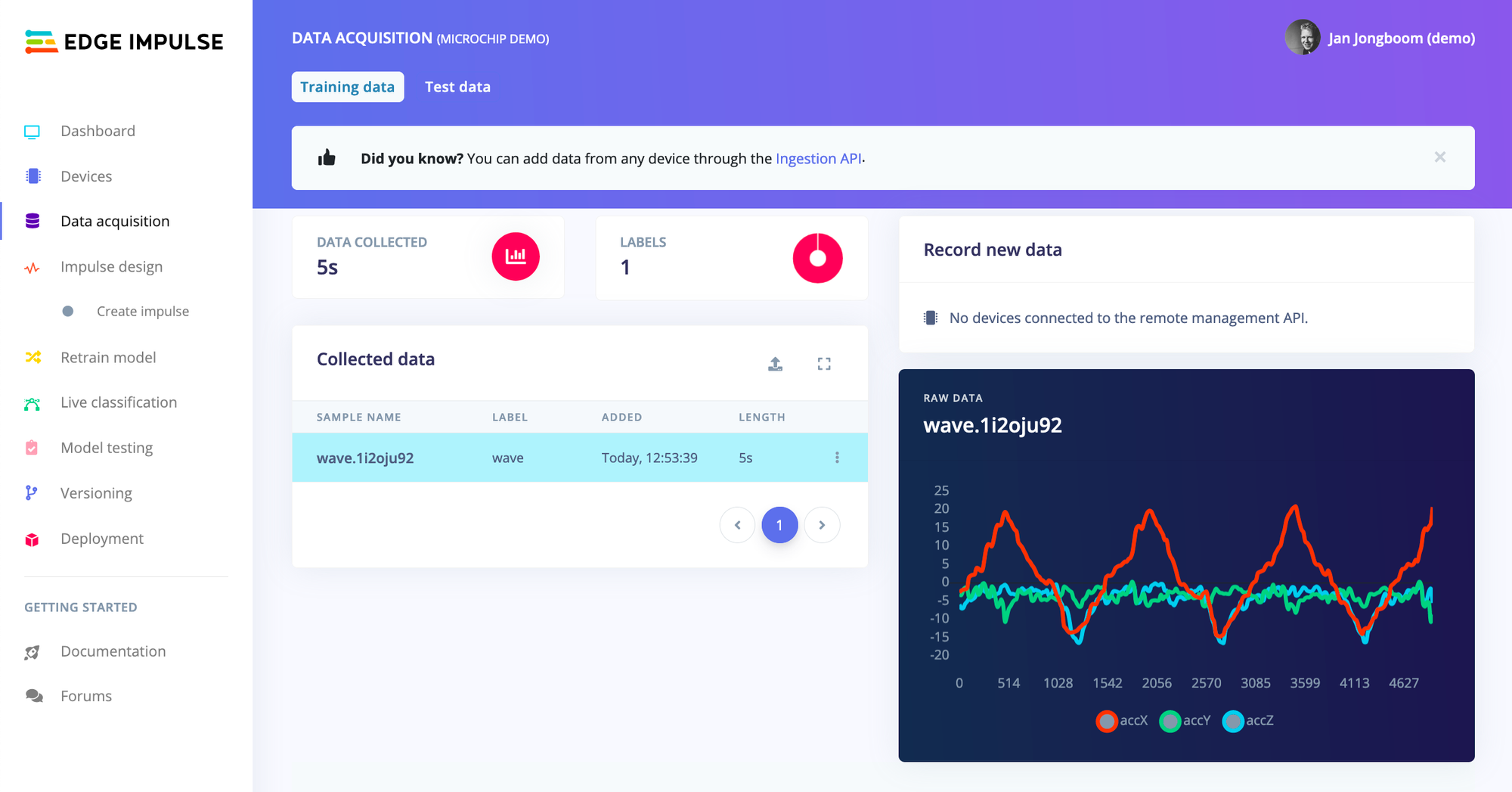

- Click Upload data and your sensor data will show up in Edge Impulse under Data acquisition.

- You’re all set up to build your first machine learning model. Follow this tutorial to really get started: Continuous motion recognition.

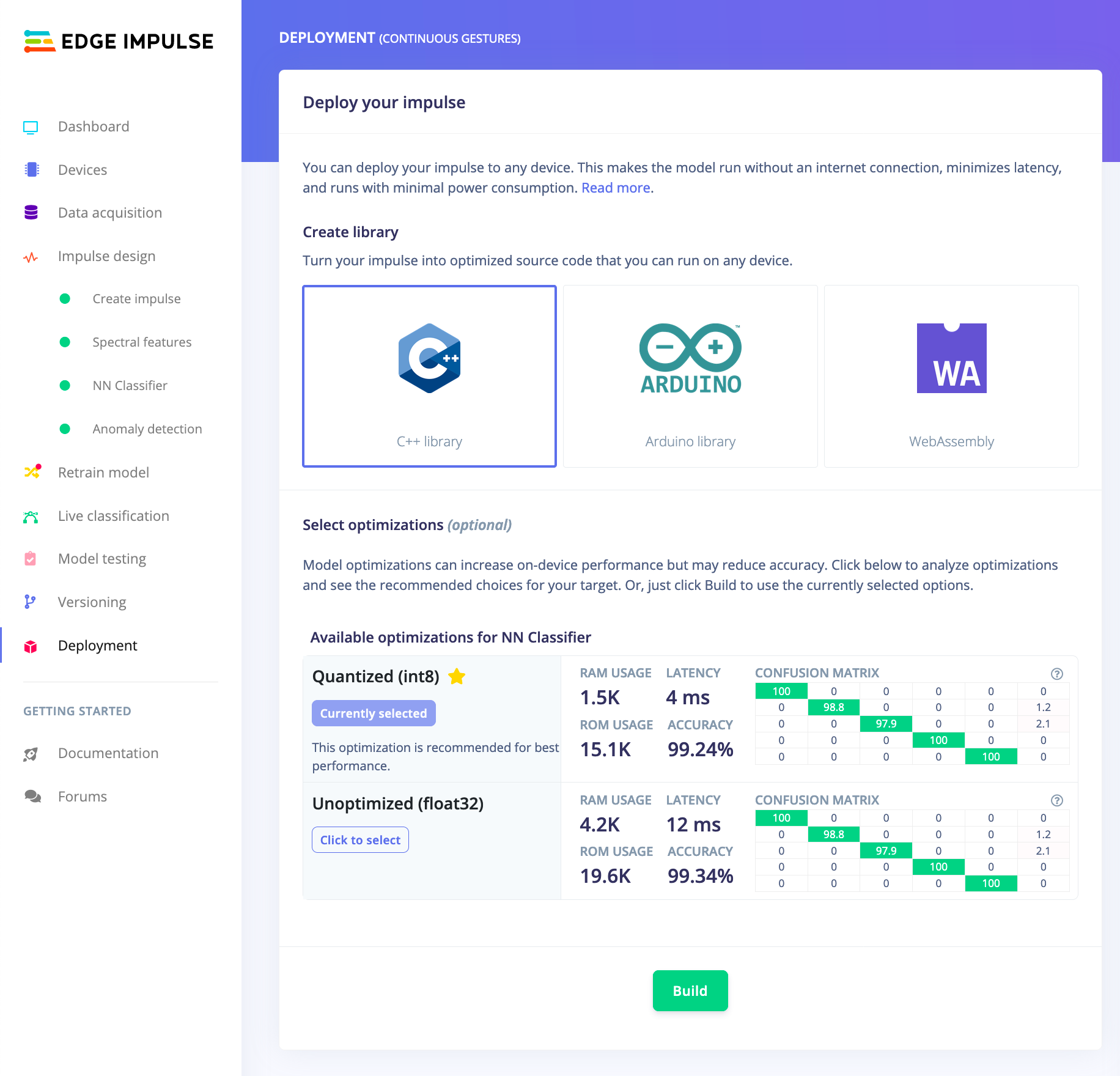

- When you’re done, export your project as a C++ Library.

- You now have a library that you can integrate with your firmware with a single call. Just grab some data from the IMU sensor, and call `run_classifier` to get predictions back. Here’s an example with a Makefile. This library contains highly optimized math, and will even leverage the vector extensions on any Microchip MCU that supports them. Even the SAM-IoT with its Cortex-M0+ is fast enough to do real-time classification on accelerometer data, taking ~376 ms to analyze and classify 2 seconds of data.
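To make the "grab some data from the IMU sensor" step more concrete, here is a minimal sketch of a fixed-size window that collects 3-axis readings into one flat buffer before classification. The 100 Hz sample rate, the interleaved x, y, z layout, and the `SampleWindow` class are assumptions made for illustration and are not part of the exported library. The call into the library is only indicated in a comment, because its exact signature depends on the SDK version you export.

```cpp
#include <array>
#include <cassert>
#include <cstddef>

// Hypothetical window parameters: 2 seconds of 3-axis data at 100 Hz.
constexpr std::size_t kAxes = 3;
constexpr std::size_t kSamplesPerWindow = 200;
constexpr std::size_t kWindowSize = kAxes * kSamplesPerWindow;

// Collects interleaved readings (x0, y0, z0, x1, y1, z1, ...) until full.
class SampleWindow {
public:
    // Append one accelerometer reading; returns true once the window is full.
    bool push(float x, float y, float z) {
        assert(count_ + kAxes <= kWindowSize && "reset() before reusing a full window");
        data_[count_++] = x;
        data_[count_++] = y;
        data_[count_++] = z;
        return full();
    }

    bool full() const { return count_ == kWindowSize; }
    void reset() { count_ = 0; }

    const float* data() const { return data_.data(); }
    std::size_t size() const { return count_; }

private:
    std::array<float, kWindowSize> data_{};
    std::size_t count_ = 0;
};

// Intended use (assumption): when push() returns true, pass data() and size()
// to the exported library's classifier call, read the predictions, then reset().
```

With the timing quoted above, classifying a 2-second window in ~376 ms would keep the CPU busy for roughly 19% of each window (376 / 2000), leaving the rest for sampling and other application work.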

And this is just the start of what you can do with embedded machine learning. We also have tutorials on recognizing sounds and adding sight to your sensors, and you can easily extend Edge Impulse by adding new signal processing blocks and custom machine learning architectures. Whether you’re looking to build a self-driving car, make a device that can sniff booze, or do chemical sensing, we’ve got you covered! The resulting libraries will run on any Arm-based Microchip MCU (depending on the size of your models).

If you have any questions on the new Microchip integration or want to share an interesting project that you’re working on, let us know on the forums. We can’t wait to see what you’ll build!

Jan Jongboom is the CTO and co-founder of Edge Impulse.