In digital signal processing, downsampling takes data recorded at a high sampling rate and reduces it to a lower sampling rate, and with it a smaller bandwidth. The original signal is first passed through a low-pass filter, which attenuates the frequencies above a certain threshold (at most half of the new sampling rate) so that they cannot alias into the result. Then only every Nth sample is kept, creating an approximation of the signal at the lower sampling rate.

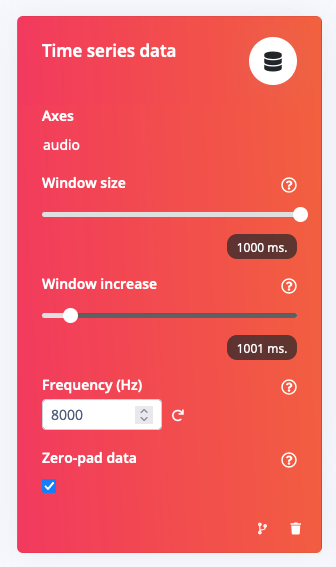
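To make the two steps concrete, here is a minimal sketch in plain Python: a windowed-sinc low-pass filter followed by keeping every Nth sample. It is not the implementation used by the Edge Impulse downsampling block. The function names, the 63-tap Hamming-windowed filter, the cutoff choice, and the two test tones are all assumptions made for illustration.

```python
import math

def lowpass_fir(cutoff, num_taps=63):
    """Windowed-sinc low-pass FIR; cutoff is a fraction of the sampling rate (0 to 0.5)."""
    mid = (num_taps - 1) / 2
    taps = []
    for n in range(num_taps):
        x = n - mid
        # Ideal low-pass impulse response (a sinc), handled separately at x == 0.
        ideal = 2 * cutoff if x == 0 else math.sin(2 * math.pi * cutoff * x) / (math.pi * x)
        # A Hamming window tames the ripple caused by truncating the sinc.
        window = 0.54 - 0.46 * math.cos(2 * math.pi * n / (num_taps - 1))
        taps.append(ideal * window)
    gain = sum(taps)
    return [t / gain for t in taps]  # normalize to unity gain at DC

def decimate(signal, factor, num_taps=63):
    """Low-pass filter the signal, then keep every `factor`-th sample."""
    # Assumed cutoff: slightly below the new Nyquist frequency (0.5 / factor).
    taps = lowpass_fir(0.45 / factor, num_taps)
    half = num_taps // 2
    out = []
    for i in range(0, len(signal), factor):  # only compute the samples we keep
        acc = 0.0
        for k, tap in enumerate(taps):
            j = i + k - half  # filter centered on sample i; zero outside the signal
            if 0 <= j < len(signal):
                acc += tap * signal[j]
        out.append(acc)
    return out

def amplitude(samples):
    """Peak absolute value, skipping the edges where the filter sees zero padding."""
    return max(abs(s) for s in samples[20:-20])

fs = 16000   # original sampling rate in Hz (illustrative)
factor = 4   # 16 kHz -> 4 kHz, so the new Nyquist frequency is 2 kHz
n = 1600     # 0.1 s of samples
low = [math.sin(2 * math.pi * 500 * t / fs) for t in range(n)]    # 500 Hz, below 2 kHz
high = [math.sin(2 * math.pi * 3000 * t / fs) for t in range(n)]  # 3 kHz, above 2 kHz

low_ds = decimate(low, factor)
high_ds = decimate(high, factor)
print(len(low_ds))         # 400 samples, one quarter of the original
print(amplitude(low_ds))   # close to 1: the 500 Hz tone survives
print(amplitude(high_ds))  # close to 0: the 3 kHz tone is removed before it can alias
```

Without the filter, the 3 kHz tone would fold back into the downsampled signal as a spurious 1 kHz tone, which is why the filtering step comes first.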

In edge AI, it is tempting to always capture data at the highest resolution possible; however, some use cases, such as predicting machine failures or spotting keywords, do not need very high-resolution sensor data. Lower resolution data is faster to process, which can extend your device’s battery life, often with little or no loss in accuracy. To facilitate this, we have added a downsampling block to the Edge Impulse Studio.

Why is downsampling useful for embedded machine learning?

Suppose your use case doesn’t need the full resolution of your time series data. Downsampling enables you to create even smaller models, since each window of data contains fewer data points for the machine learning algorithm to process. For embedded AI, memory usage is vital; creating a smaller but still highly accurate model allows you to save space for other application code and processes on the device.
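As a rough back-of-the-envelope illustration, the snippet below counts how many raw values a single window of data holds at different sampling rates. The helper name, the one-second window, and the float32 storage are assumptions; the final model size also depends on the processing block and network architecture you choose, so treat these numbers only as the size of the raw input.

```python
BYTES_PER_FLOAT32 = 4  # assumed storage format for one raw value

def samples_per_window(sample_rate_hz, window_ms, axes=1):
    """Number of raw values in one window of time series data."""
    return sample_rate_hz * window_ms // 1000 * axes

window_ms = 1000  # a one-second window (illustrative)
for rate in (16000, 8000, 4000):
    count = samples_per_window(rate, window_ms)
    print(f"{rate} Hz: {count} values, {count * BYTES_PER_FLOAT32} bytes as float32")
```

Halving the sampling rate halves the raw window, so going from 16 kHz to 4 kHz shrinks a one-second audio window from 16000 values to 4000.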

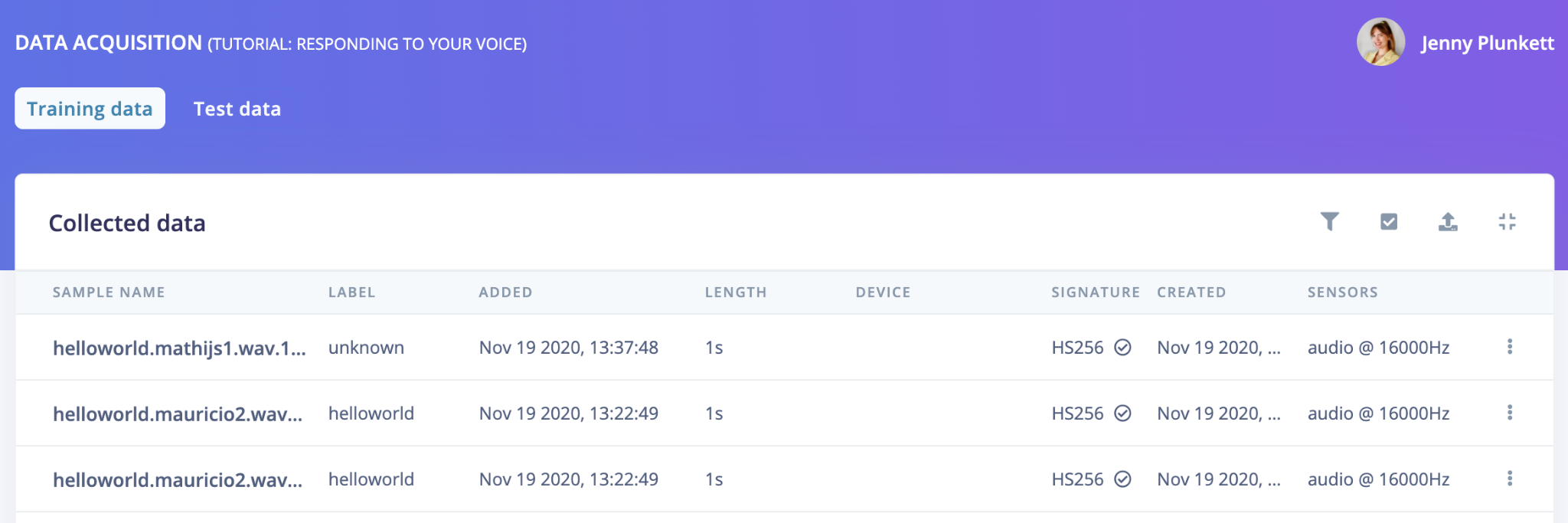

Downsampling is also helpful for model reusability between devices whose sensors record at different sampling rates. For example, I could record audio samples on my mobile phone at 16 kHz and train a model using that data, but deploy to an embedded device like the Micro:bit, whose microphone samples at 11 kHz. Downsampling my original data in Edge Impulse allows me to bring these data sources to the same sampling rate automatically and re-train my model at this new rate without having to re-record my training/testing data.
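Going from 16 kHz to 11 kHz is not a whole-number reduction, so simply keeping every Nth sample does not work; the signal has to be resampled by the ratio 11/16. The sketch below shows one way to do that in plain Python, by evaluating a Hann-windowed sinc kernel at each output instant. It is an illustration under stated assumptions, not how Edge Impulse performs the conversion: the function name, the `half_width` parameter, and the 1 kHz test tone are hypothetical choices.

```python
import math
from fractions import Fraction

def resample(signal, rate_in, rate_out, half_width=16):
    """Band-limited resampling: evaluate a windowed sinc at each output instant."""
    ratio = Fraction(rate_out, rate_in)   # 11000/16000 reduces to 11/16
    step = float(ratio)
    # When lowering the rate, the kernel must cut off at the new Nyquist frequency.
    cutoff = min(1.0, step)               # relative to the input Nyquist frequency
    n_out = len(signal) * ratio.numerator // ratio.denominator
    reach = half_width / cutoff           # kernel half-length in input samples
    out = []
    for m in range(n_out):
        t = m / step                      # output instant, measured in input samples
        lo = max(0, math.ceil(t - reach))
        hi = min(len(signal) - 1, math.floor(t + reach))
        acc = 0.0
        for k in range(lo, hi + 1):
            d = t - k
            x = cutoff * d
            sinc = 1.0 if x == 0 else math.sin(math.pi * x) / (math.pi * x)
            window = 0.5 + 0.5 * math.cos(math.pi * d / reach)  # Hann window over [-reach, reach]
            acc += cutoff * sinc * window * signal[k]
        out.append(acc)
    return out

# 0.1 s of a 1 kHz tone recorded at 16 kHz (illustrative test signal).
audio_16k = [math.sin(2 * math.pi * 1000 * n / 16000) for n in range(1600)]
audio_11k = resample(audio_16k, 16000, 11000)

# Compare against the same tone sampled directly at 11 kHz, ignoring the edges.
expected = [math.sin(2 * math.pi * 1000 * m / 11000) for m in range(len(audio_11k))]
max_error = max(abs(a - b) for a, b in zip(audio_11k[30:-30], expected[30:-30]))
print(len(audio_11k))  # 1100 samples for the same 0.1 s of audio
print(max_error)       # small, since a 1 kHz tone is well below the new 5.5 kHz limit
```

Computing every output sample directly like this is easy to follow but slow on long recordings; in practice a polyphase filter would be the more usual choice.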

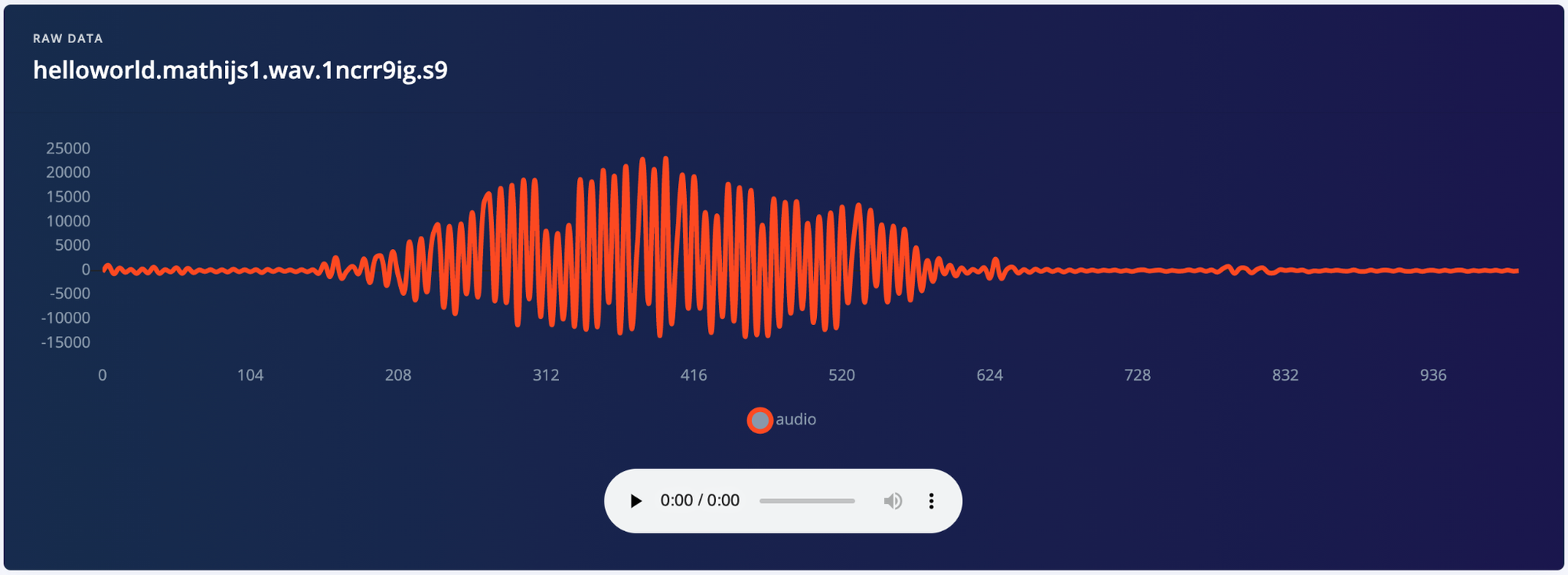

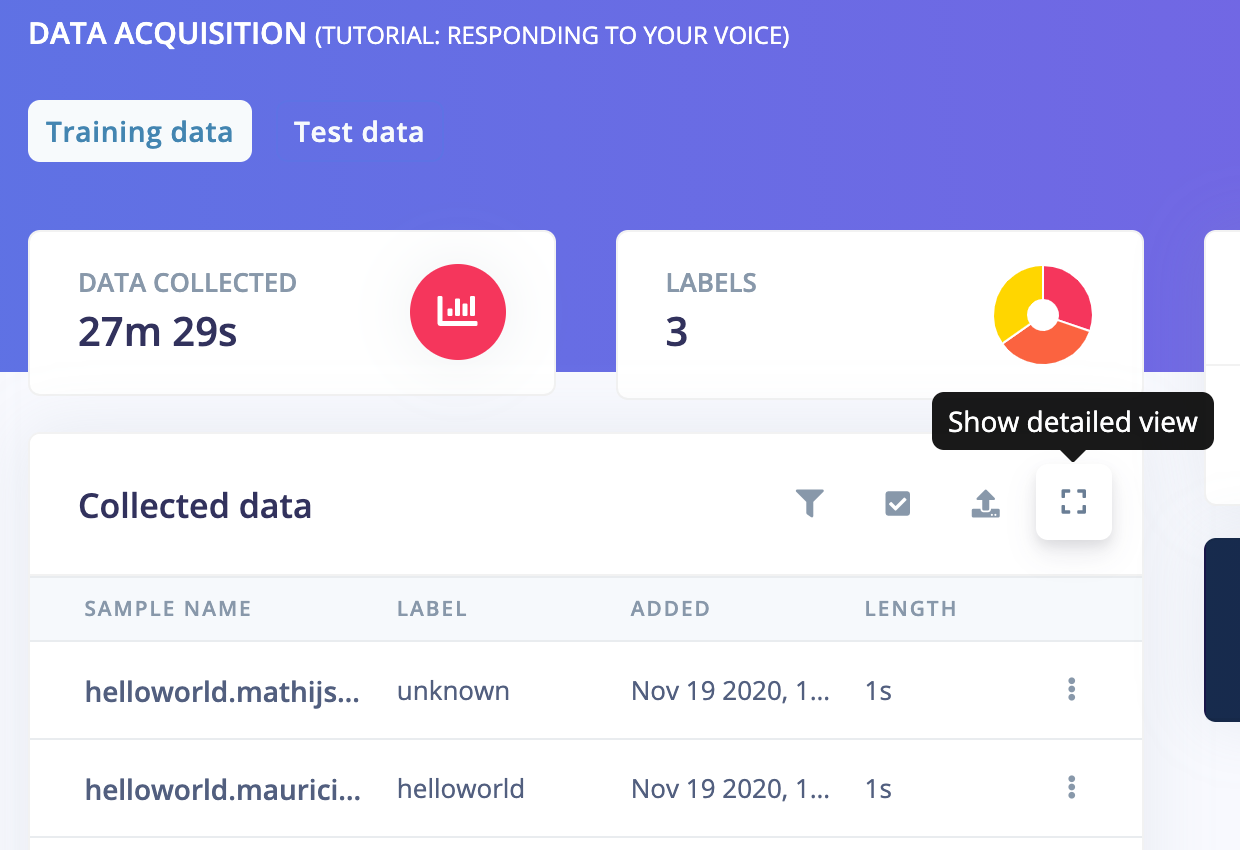

To view the frequency of your original data samples, select the “Data acquisition” tab of your Edge Impulse project and click the Show detailed view button:

The frequency of your time series data samples is displayed under the “Sensors” column at the far right:

What’s next?

Recently we launched Edge Impulse’s new AutoML tool, the EON Tuner. Currently, the EON Tuner is available to all of our users and generates a wide range of parameter and layer combinations for the digital signal processing and neural network blocks for audio use cases. Because downsampling time series data like audio is so valuable for decreasing the trained model size and increasing model reusability, the EON Tuner will soon also include a tunable parameter to automatically generate models that use various downsampled sampling rates.