There are a thousand things that can go wrong in the process of manufacturing a complex product, and good quality control is the only thing standing between success and a recall that tarnishes a company’s image for years to come. These days, quality control systems are likely to be automated because of the superior speed, accuracy, and consistency they offer. The manual inspection processes of the past were plagued with problems, such as worker fatigue and an inconsistent application of standards, which led to higher rates of both false positives and missed defects.

Unfortunately, not everyone is able to benefit from the technological advances that have reshaped quality control, usually because of the high initial investment cost or the need for highly specialized technical expertise to implement and maintain them. Small- and mid-sized manufacturing operations, in particular, struggle to justify the expense of fully automated machine vision systems and must continue to rely on manual or semi-manual processes. This puts them at a distinct disadvantage when competing with larger companies that can afford to invest in the best available quality control systems.

Engineer Naveen Kumar has a lot of experience innovating with machine learning tools and applications, so he knows these algorithms have the potential to act as a great equalizer. If they are applied to a problem with care, the solution need not be expensive or overly complicated. In fact, Kumar believes that a small manufacturer could prototype a computer vision-powered quality control system in a matter of hours, using no more than a few hundred dollars in parts. To prove it, he put his PLC where his mouth is and built a custom industrial sorting system that could be used to separate good products from defective ones on a production line.

Honey, I shrunk the factory

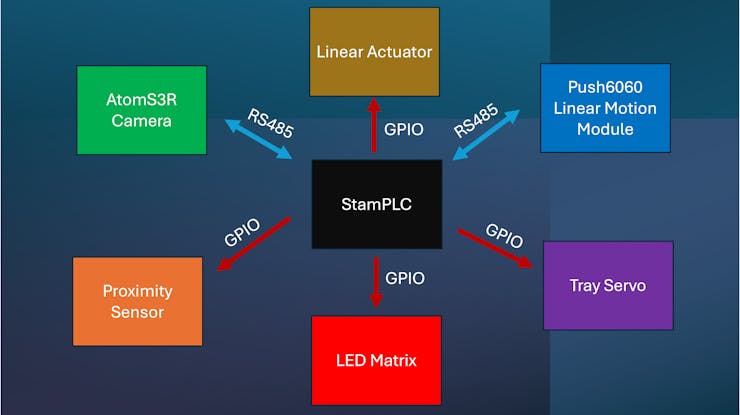

To fully test out his ideas, Kumar created a miniature factory floor. The central nervous system of the operation is the M5Stack StamPLC, a Programmable Logic Controller (PLC) based on the ESP32-S3 microcontroller. This manages the real-time operations of the entire line. For the system's "eyes," Kumar selected the M5Stack AtomS3R-CAM. Also driven by an ESP32-S3 microcontroller and equipped with 8MB of PSRAM, this tiny camera is capable of efficient, on-device AI model processing.

To make a complete sorting system, the controller would need to physically move objects on command. For this reason, Kumar stacked colored blocks (representing products) in an aluminum feeder tube. A Pololu IR proximity sensor detects when a block is ready, signaling a Kitronik linear actuator to release it onto the track. The product is then moved smoothly under the overhead camera by an M5Stack 6060-PUSH Linear Motion Control Module. Communication between the main PLC, the camera, and the motion module is handled via the RS485 industrial serial standard. Finally, based on the camera's judgment, a servo tilts a custom 3D-printed tray to sort the block into the correct bin, while an 8x8 LED matrix provides immediate visual feedback on the system's status.

With the hardware in place, Kumar needed a computer vision algorithm that could run in real time on the ESP32-S3 microcontroller embedded within the AtomS3R-CAM. Computer vision algorithms can be very computationally intensive, so the Edge Impulse platform was chosen to help build and optimize a model that would fit comfortably within the constraints of this chip. With the help of Edge Impulse, the goal was to train a model that could accurately distinguish between differently colored blocks (specifically yellow and green), standing in for the "pass" and "fail" outcomes of a real-world inspection.

The first step in bringing this AI to life was data collection. Kumar connected the AtomS3R-CAM to a computer, where it functioned as a standard webcam. It was then linked directly to a project in Edge Impulse Studio. Next, Kumar captured hundreds of images of the yellow and green blocks. These images were taken with the blocks in various orientations, against differing backgrounds, and under differing lighting. This variety is necessary to produce a robust model that will work well under real-world conditions.

I spy with my little AI

With the data collected, Kumar moved to the impulse design phase. An impulse is a custom pipeline that defines how incoming data will be processed, from the moment of collection until a prediction is made. Because microcontrollers have limited computational resources compared to a desktop PC, the pipeline was configured to reduce the size of the input images. Smaller images mean fewer values for every later step of the impulse to process, which cuts both memory use and computation time.
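To get a feel for why shrinking the input matters, here is a minimal Python sketch of the arithmetic. The 320x240 frame and 96x96 model input are assumed sizes chosen for illustration, not figures from Kumar's project, and `input_values` and `resize_nearest` are hypothetical helpers rather than part of any Edge Impulse API.

```python
def input_values(width, height, channels=3):
    """Count the raw values in one frame (3 channels assumed for RGB)."""
    return width * height * channels


def resize_nearest(image, new_w, new_h):
    """Downscale a 2D list of pixels with nearest-neighbor sampling."""
    old_h, old_w = len(image), len(image[0])
    return [
        [image[y * old_h // new_h][x * old_w // new_w] for x in range(new_w)]
        for y in range(new_h)
    ]


# Hypothetical sizes: a 320x240 camera frame and a 96x96 model input.
full_frame = input_values(320, 240)
model_input = input_values(96, 96)
savings = full_frame / model_input  # how many times fewer values per frame

# Tiny demonstration: shrink a 4x4 "image" to 2x2.
tiny = [[r * 4 + c for c in range(4)] for r in range(4)]
small = resize_nearest(tiny, 2, 2)
```

Under these assumed sizes, the model sees roughly eight times fewer values per frame, and every later stage of the pipeline inherits that saving.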

For the actual machine learning block, Kumar chose a specialized model architecture known as FOMO MobileNetV2. FOMO (Faster Objects, More Objects) is Edge Impulse’s own approach for object detection on constrained devices. Unlike traditional object detection models that scan an image repeatedly, FOMO splits the input image into a grid and runs classification across the cells in parallel. The result is inference fast enough for real-time sorting.
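The grid idea can be sketched in a few lines of Python. This is not Edge Impulse's implementation: `decode_grid` is a hypothetical helper, the 8-pixel cell size and 0.5 threshold are assumptions, and the sketch skips the step of merging neighboring cells that belong to the same object. It only shows how per-cell class scores can be turned into labeled centroids.

```python
def decode_grid(heatmap, labels, cell_size=8, threshold=0.5):
    """Turn per-cell class scores into (label, x, y, score) centroids.

    heatmap[row][col] is a list of class scores for that grid cell,
    with index 0 assumed to be the background class.
    """
    detections = []
    for row, cells in enumerate(heatmap):
        for col, scores in enumerate(cells):
            # Pick the highest-scoring class for this cell.
            best = max(range(len(scores)), key=scores.__getitem__)
            if best != 0 and scores[best] >= threshold:
                # Report the center of the cell in input-image pixels.
                cx = col * cell_size + cell_size // 2
                cy = row * cell_size + cell_size // 2
                detections.append((labels[best], cx, cy, scores[best]))
    return detections


# Made-up scores for a 2x2 grid, for illustration only.
LABELS = ["background", "yellow", "green"]
example_heatmap = [
    [[0.90, 0.05, 0.05], [0.10, 0.80, 0.10]],
    [[0.95, 0.03, 0.02], [0.20, 0.10, 0.70]],
]
found = decode_grid(example_heatmap, LABELS)
```

Because each cell is scored independently, the model produces every cell's result in a single pass rather than sliding a window across the image.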

The model achieved a 100% F1 score on the training data and maintained 100% accuracy during the testing phase, indicating that it could reliably distinguish between the target objects in the collected dataset. That is not entirely surprising, since differentiating between yellow and green blocks is not an especially challenging problem. However, with a sufficiently large and varied training dataset, strong results may be achievable on more complex tasks as well.
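For readers unfamiliar with the metric, the F1 score is the harmonic mean of precision and recall. The short Python sketch below computes it from detection counts. The counts in the examples are invented for illustration and are not taken from Kumar's results.

```python
def f1_score(true_pos, false_pos, false_neg):
    """Harmonic mean of precision and recall, from raw counts."""
    predicted = true_pos + false_pos
    actual = true_pos + false_neg
    precision = true_pos / predicted if predicted else 0.0
    recall = true_pos / actual if actual else 0.0
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Invented counts: a flawless run and a run with a few mistakes.
perfect = f1_score(true_pos=50, false_pos=0, false_neg=0)
imperfect = f1_score(true_pos=8, false_pos=2, false_neg=2)
```

A score of 1.0 (100%) requires zero false positives and zero missed detections, which is plausible when the classes differ as clearly as yellow and green.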

To deploy the trained impulse onto the tiny AtomS3R camera, Edge Impulse’s EON Compiler was used to optimize model memory usage, alongside 8-bit quantization, ensuring the sophisticated model fit comfortably within the ESP32-S3’s memory constraints.
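To illustrate what 8-bit quantization does, here is a minimal Python sketch of a common affine scheme that maps floating-point values onto the signed 8-bit range. It is a generic illustration under stated assumptions, not the EON Compiler's internals; the helper names and the 0.0 to 2.55 value range are hypothetical.

```python
def quant_params(x_min, x_max, q_min=-128, q_max=127):
    """Choose a scale and zero point that map [x_min, x_max] onto int8."""
    # Make sure 0.0 is representable, as is usual for this scheme.
    x_min, x_max = min(x_min, 0.0), max(x_max, 0.0)
    scale = (x_max - x_min) / (q_max - q_min)
    if scale == 0:
        scale = 1.0  # degenerate range: every value is zero
    zero_point = round(q_min - x_min / scale)
    return scale, zero_point


def quantize(x, scale, zero_point, q_min=-128, q_max=127):
    """Map a float to an 8-bit integer, clamping out-of-range values."""
    q = round(x / scale) + zero_point
    return max(q_min, min(q_max, q))


def dequantize(q, scale, zero_point):
    """Recover an approximate float from the 8-bit integer."""
    return (q - zero_point) * scale


# Hypothetical range of values, for illustration only.
scale, zero_point = quant_params(0.0, 2.55)
q = quantize(1.0, scale, zero_point)
approx = dequantize(q, scale, zero_point)
```

Each value stored this way takes one byte instead of the four bytes of a 32-bit float, at the cost of a small rounding error bounded by the scale.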

The logic behind the magic

The final piece of the puzzle was integrating this AI vision with industrial control. The StamPLC application was designed using Finite State Machine (FSM) principles. An FSM ensures reliability by defining distinct system states — such as IDLE, DETECT_READY, OBJECT_DETECTION, and SORTING — and the specific conditions that trigger transitions between them.
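A finite state machine of this kind can be sketched compactly in Python. The state names come from the project as described here, but the transition conditions (`block_present`, `in_position`, `label`, `sort_done`) are assumptions made for illustration. Kumar's actual firmware runs on the StamPLC and may be structured differently.

```python
from enum import Enum, auto


class State(Enum):
    IDLE = auto()
    DETECT_READY = auto()
    OBJECT_DETECTION = auto()
    SORTING = auto()


def next_state(state, block_present=False, in_position=False,
               label=None, sort_done=False):
    """Return the next state; stay put if no transition condition holds."""
    if state is State.IDLE and block_present:
        return State.DETECT_READY          # feeder sensor saw a block
    if state is State.DETECT_READY and in_position:
        return State.OBJECT_DETECTION      # block is under the camera
    if state is State.OBJECT_DETECTION and label is not None:
        return State.SORTING               # camera reported a result
    if state is State.SORTING and sort_done:
        return State.IDLE                  # tray tilted, ready for next block
    return state


# Walk one block through the whole cycle.
s = State.IDLE
trace = [s]
for inputs in (
    {"block_present": True},
    {"in_position": True},
    {"label": "yellow"},
    {"sort_done": True},
):
    s = next_state(s, **inputs)
    trace.append(s)
```

Because every transition is guarded by an explicit condition, an unexpected input simply leaves the machine in its current state instead of pushing the line into an undefined one.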

When the system detects a block, it moves the block into position under the camera, and the PLC sends a request via RS485 to the camera. The camera runs the on-device Edge Impulse model, determines if the block is yellow or green, and sends the result back. Based on this result, the PLC instructs the servo to sort the product accordingly.

In the end, Kumar’s project successfully demonstrated that the barriers to entry for automated, AI-driven quality control are not as high as they used to be. With the right tools, a prototype can be developed on a very short timeline.

To learn how you can get started on your own system, take a look at Kumar’s project write-up.