Back in the days before the internet ate everything, there were print magazines for every interest — from video games to golf, to cooking, to celebrity gossip, practically all niches were catered for. Perhaps none better than automobiles, for which an immense array of publications existed, focusing on classic cars to off-roaders to cars you build yourself. And until 2014, one amongst the many that lined bookstore shelves was Import Tuner, “Living the fast life, in fast cars. Featuring the finest in tuned automobiles.” It was fun to flip through the pages, knowing deep down that you would probably never accumulate the skills or funds necessary to match the amazing examples displayed on Import Tuner’s glossy pages. For some, machine learning may seem similarly out of reach — the realm of PhDs and data scientists, in the same way that the 1600 horsepower cover car was the domain of elite tuners. But with Edge Impulse’s new EON Tuner, the barrier to entry for advanced model generation and selection just got a whole lot lower!

While Edge Impulse has always made it easy to gather data, generate and test models, and deploy them to hardware, that process still required a fair amount of expertise, or at least a willingness to select and configure blocks during impulse design without necessarily having a clear understanding of what the ideal blocks or parameters might be for a given application. For the beginning machine learnist, there are quite a few selections to be made, for which it would be really handy to have a data scientist watching over your shoulder. Edge Impulse’s new EON Tuner effectively provides an always-on data scientist in the cloud, ready to generate and evaluate dozens of solutions to your machine learning problem.

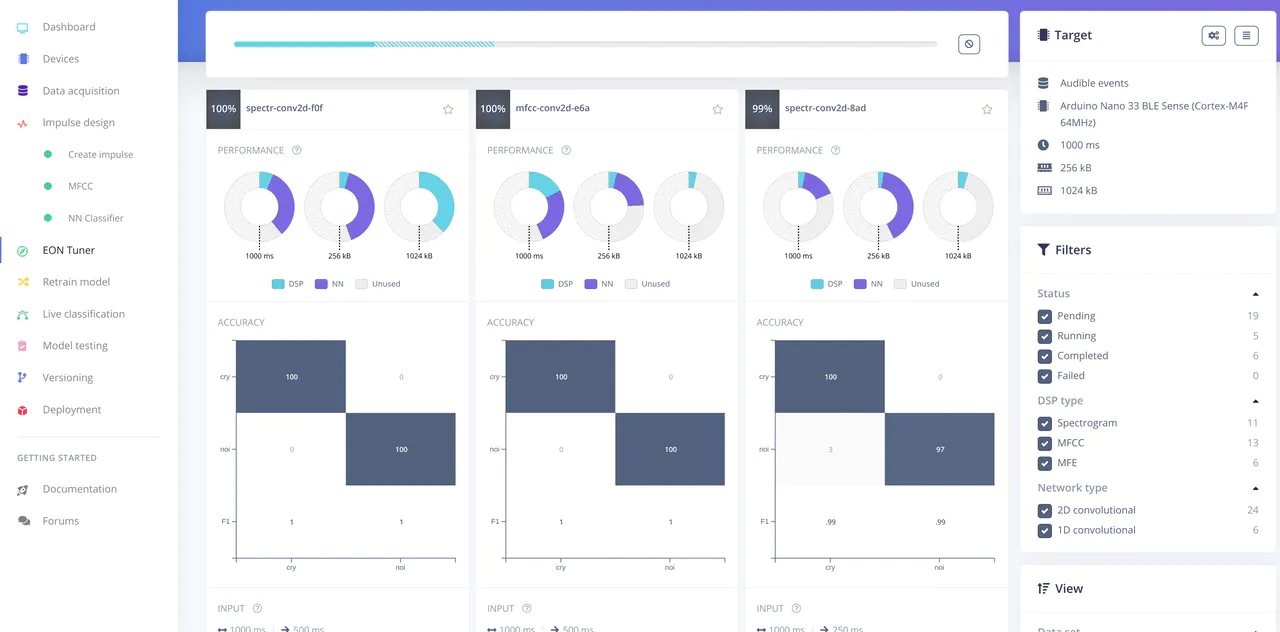

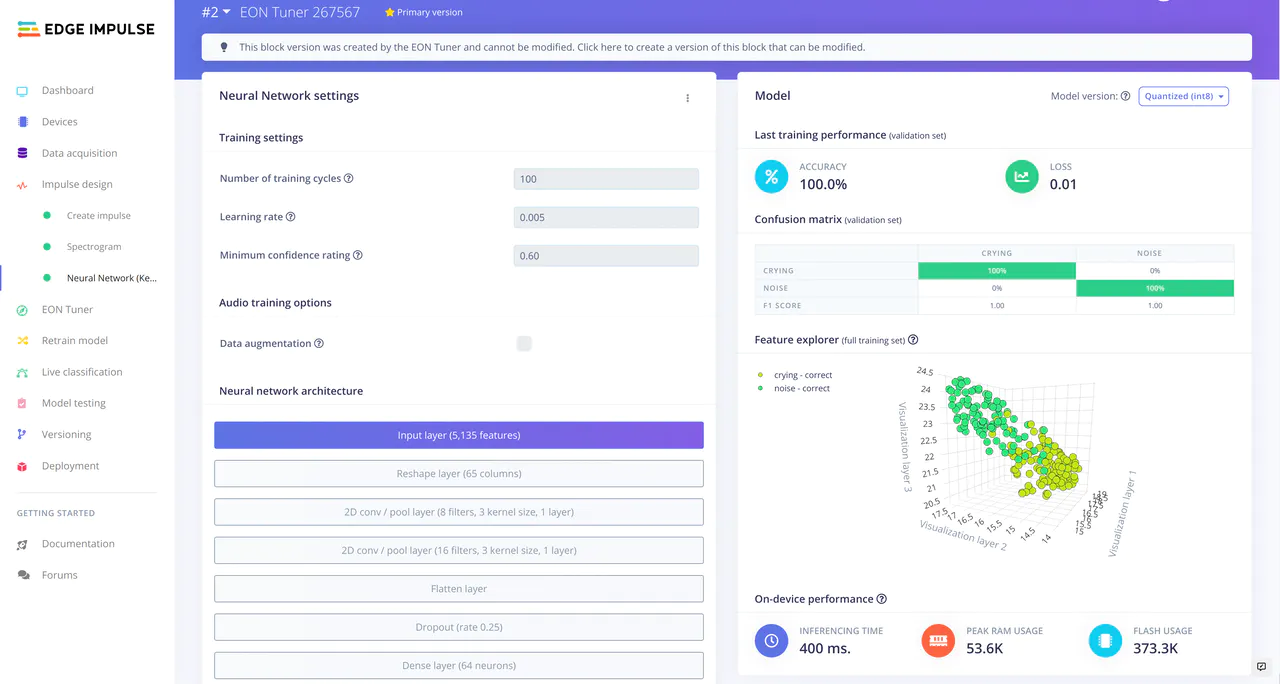

To evaluate the new functionality, I took a project from (wow) exactly a year ago, BABL, my machine learning-enabled baby monitor, to see what kind of improvements EON Tuner might bring to the table. Complete details of my experience can be found in my new BABL x EON project on Hackster, but the tl;dr is that with literally two button clicks (one to generate model variants, and one to select my preferred replacement for my original blocks) I improved classification accuracy from 86% to 100%!

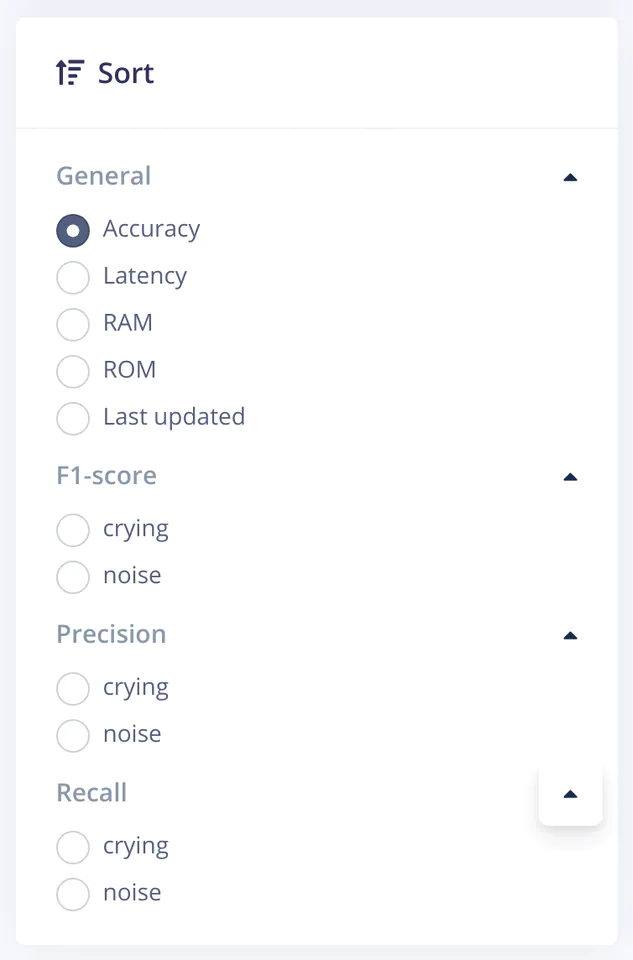

Certainly there is a lot more to EON Tuner than increasing accuracy by 14 percentage points with one weird trick: you can filter results based on latency or RAM usage, or on a particular classification. And since you can easily evaluate the new model right there in the browser with Live classification, it’s easy to audition different variants to find the best one for your application.
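
If you like to see that kind of filtering spelled out, here is a minimal Python sketch of the idea: keep only the candidates that fit a latency and RAM budget, then take the most accurate one that remains. To be clear, this is not EON Tuner's API or its actual selection logic. The `Candidate` class, the `pick_best` function, the variant names, and every number below are made up purely for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Candidate:
    """One hypothetical model variant (all fields are illustrative)."""
    name: str
    accuracy: float    # fraction between 0 and 1
    latency_ms: float  # inference time on the target device
    ram_kb: float      # peak RAM usage


def pick_best(candidates: List[Candidate],
              max_latency_ms: float,
              max_ram_kb: float) -> Optional[Candidate]:
    """Return the most accurate candidate that fits both budgets.

    Ties on accuracy are broken in favor of lower latency.
    Returns None when nothing fits.
    """
    fits = [c for c in candidates
            if c.latency_ms <= max_latency_ms and c.ram_kb <= max_ram_kb]
    if not fits:
        return None
    return max(fits, key=lambda c: (c.accuracy, -c.latency_ms))


# Invented numbers, not results from any real project.
candidates = [
    Candidate("variant-a", 0.98, 420.0, 96.0),
    Candidate("variant-b", 0.95, 120.0, 40.0),
    Candidate("variant-c", 0.95, 90.0, 48.0),
    Candidate("variant-d", 0.88, 30.0, 16.0),
]

best = pick_best(candidates, max_latency_ms=200.0, max_ram_kb=64.0)
print(best.name if best else "nothing fits the budget")
```

With these invented numbers, the most accurate variant overall is ruled out by the budget, which is exactly the sort of tradeoff you end up weighing when auditioning variants.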

Of course, EON Tuner is not really magic — you should still evaluate the generated models, making judgement calls for yourself about the tradeoffs between accuracy, latency, and resource usage — but it sure beats poking around in blocks and typing in your best guess about the number of required epochs by hand. If only real life were this easy — maybe I’d be sitting in my dream twin-turbo RB26DETT R34 Skyline with bosozoku pipes right now!