We’ve all grumbled about cyclists on the road in front of us at one time or another. When you’ve got somewhere you need to be, the last thing you want is to be stuck behind a bicycle. But just imagine how the cyclists feel! All they want to do is get a little exercise, but they have to constantly look over their shoulders to make sure drivers whipping by at 50 miles per hour aren’t too distracted to notice them.

Sadly, drivers all too often do not give cyclists the attention they deserve. According to NHTSA data, around 45,000 injuries result from cars colliding with bicycles each year in the US alone. It is by no means a fair fight, either: the injuries sustained are frequently very serious. Accordingly, any protective measures that can be put into place would be very welcome.

As a cyclist himself, Manivannan Sivan recognized that better communication could make a big difference. Before making a turn, cyclists signal with an arm gesture to alert nearby motorists. However, many drivers are not familiar with these signals, so they do not have the desired effect. Moreover, taking a hand off the handlebar, especially at high speeds, could in and of itself cause a crash.

A Hands-Free Vision for Safety

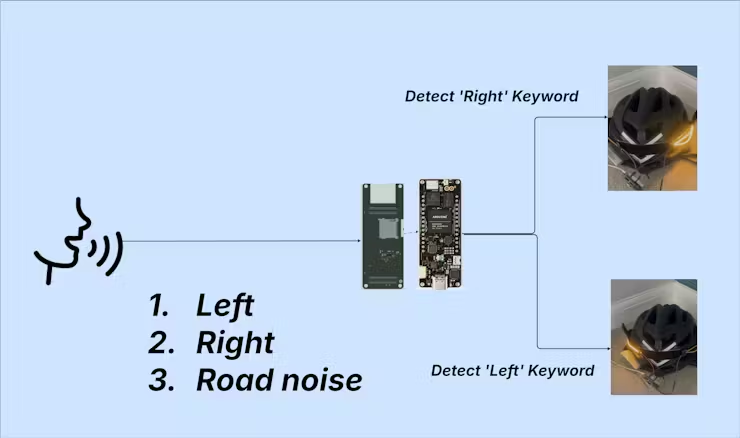

Sivan believes that a more universal sign, like a blinking turn signal, would be instantly recognizable to drivers. That should help break down the present communication barrier, but another component was needed so that triggering the signal would not itself cause a wipeout. The trigger would need to be hands-free, so that the rider’s hands never leave the handlebar. Sivan’s idea was to use voice control to signal a left or right turn.

All bikes are different, so rather than attempting to accommodate them all, Sivan came up with the clever idea of building this safety system into a helmet. This solution does present one notable challenge, however: all of the hardware has to be small and light enough to rest comfortably on one’s head. To make this possible, Sivan turned to Edge Impulse and Arduino.

Using the Edge Impulse platform, Sivan shrank an AI-powered voice recognition model down to a size that requires minimal computational resources. This also minimizes energy consumption, an important factor when working with portable, battery-powered hardware. Even so, the hardware would need to be energy-efficient and up to the task of running a machine learning model, so Sivan chose the powerful Arduino Portenta H7. This was paired with a Portenta Vision Shield, which adds a microphone, and a power bank to keep everything running on the go.

Building the Intelligence

Sivan’s plan was for the Arduino to continually capture audio samples with the microphone. These samples would then be analyzed by a machine learning classifier created with Edge Impulse. When that classifier recognized the sound of the cyclist saying ‘left’ or ‘right,’ it would trigger the Arduino to flash a flexible LED filament positioned on the rear of the corresponding side of the helmet.
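To make the decision step concrete, here is a minimal Python sketch of the logic that might sit between the classifier and the LEDs. It assumes the classifier reports a confidence score per label for each audio window, and that the labels are named `left`, `right`, and `noise`; the `TurnSignalController` class, the 0.8 threshold, and the two-window cooldown are hypothetical choices for illustration, not details from Sivan’s code. The cooldown matters if the audio is analyzed in overlapping windows (also an assumption here), because a single spoken word can then show up in more than one window and fire the signal twice.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional

# Hypothetical label names for the two voice commands.
COMMAND_LABELS = ("left", "right")


@dataclass
class TurnSignalController:
    threshold: float = 0.8      # assumed minimum confidence to accept a command
    cooldown_windows: int = 2   # assumed number of windows ignored after a trigger
    _cooldown: int = field(default=0, init=False, repr=False)

    def update(self, scores: Dict[str, float]) -> Optional[str]:
        """Take one window's per-label scores; return 'left', 'right', or None."""
        if self._cooldown > 0:
            # Still inside the cooldown from the previous trigger: ignore this window.
            self._cooldown -= 1
            return None
        label = max(scores, key=scores.get)
        if label in COMMAND_LABELS and scores[label] >= self.threshold:
            self._cooldown = self.cooldown_windows
            return label
        return None  # road noise or a low-confidence guess


controller = TurnSignalController()
windows = [
    {"left": 0.05, "right": 0.03, "noise": 0.92},
    {"left": 0.91, "right": 0.04, "noise": 0.05},
    {"left": 0.88, "right": 0.02, "noise": 0.10},  # same word, overlapping window
    {"left": 0.02, "right": 0.10, "noise": 0.88},
    {"left": 0.10, "right": 0.85, "noise": 0.05},
]
print([controller.update(w) for w in windows])
# → [None, 'left', None, None, 'right']
```

Requiring both a winning label and a minimum confidence is a simple guard against road noise that happens to sound a little like a command.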

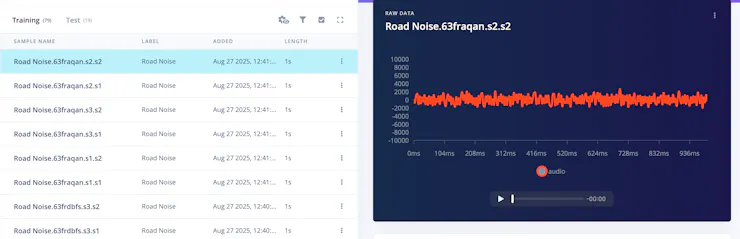

To bring this plan to life, Sivan first collected a dataset of audio recordings of himself saying ‘left’ and ‘right’. He also recorded normal road noise so that the model would learn to ignore it rather than trigger the turn signals by mistake. This process was greatly simplified by first installing the Edge Impulse firmware onto the Portenta H7, which linked the hardware to an Edge Impulse project and automatically uploaded each sample as it was collected.

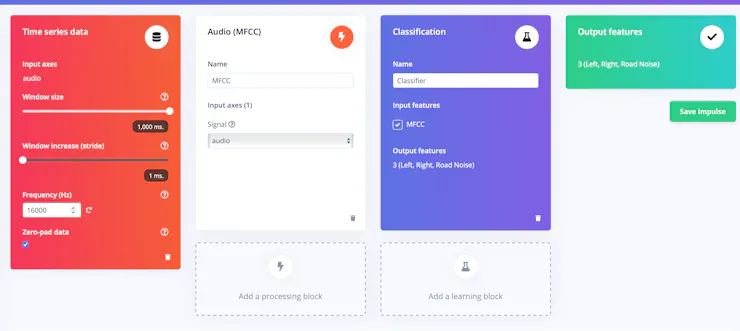

Next, Sivan created an impulse: the set of processing steps that takes raw sensor data and turns it into a prediction from a machine learning model. In this case, the impulse first splits incoming audio into one-second windows. The audio in each window is then preprocessed by an Audio MFCC block, which extracts Mel-frequency cepstral coefficients, a compact representation of the sound’s spectrum that captures its most informative features and is especially well suited to voice. Finally, Sivan fed these features into a neural network classifier that distinguishes among three classes: the commands ‘left’ and ‘right’, and normal road noise.
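The windowing and MFCC steps can be sketched in a few dozen lines of NumPy. This is a generic textbook MFCC pipeline (framing, Hamming window, power spectrum, mel filterbank, log, DCT), not Edge Impulse’s implementation, and every parameter value here (16 kHz audio, 20 ms frames, a half-second stride between one-second windows, 32 mel filters, 13 coefficients) is an illustrative assumption rather than a setting from Sivan’s project.

```python
import numpy as np

SAMPLE_RATE = 16_000  # assumed microphone sample rate in Hz


def sliding_windows(audio, window_s=1.0, stride_s=0.5, sr=SAMPLE_RATE):
    """Split a 1-D signal into fixed-length windows, one row per window."""
    win, hop = int(round(window_s * sr)), int(round(stride_s * sr))
    if len(audio) < win:
        return np.empty((0, win))
    return np.stack([audio[s:s + win] for s in range(0, len(audio) - win + 1, hop)])


def hz_to_mel(f):
    return 2595.0 * np.log10(1.0 + f / 700.0)


def mel_to_hz(m):
    return 700.0 * (10.0 ** (m / 2595.0) - 1.0)


def mel_filterbank(n_filters, n_fft, sr):
    """Triangular filters spaced evenly on the mel scale, shape (n_filters, n_fft//2 + 1)."""
    mels = np.linspace(hz_to_mel(0.0), hz_to_mel(sr / 2), n_filters + 2)
    bins = np.floor((n_fft + 1) * mel_to_hz(mels) / sr).astype(int)
    fb = np.zeros((n_filters, n_fft // 2 + 1))
    for i in range(1, n_filters + 1):
        left, center, right = bins[i - 1], bins[i], bins[i + 1]
        for k in range(left, center):
            fb[i - 1, k] = (k - left) / (center - left)    # rising edge
        for k in range(center, right):
            fb[i - 1, k] = (right - k) / (right - center)  # falling edge
    return fb


def dct_ii_matrix(n_out, n_in):
    """Orthonormal DCT-II basis, shape (n_out, n_in)."""
    n = np.arange(n_in)
    k = np.arange(n_out)[:, None]
    basis = np.sqrt(2.0 / n_in) * np.cos(np.pi * k * (2 * n + 1) / (2 * n_in))
    basis[0] /= np.sqrt(2.0)
    return basis


def mfcc(window, sr=SAMPLE_RATE, frame_s=0.02, hop_s=0.02,
         n_fft=512, n_filters=32, n_coeffs=13):
    """Return an (n_frames, n_coeffs) MFCC matrix for one audio window."""
    frame_len, hop = int(round(frame_s * sr)), int(round(hop_s * sr))
    n_frames = 1 + (len(window) - frame_len) // hop
    idx = np.arange(frame_len)[None, :] + hop * np.arange(n_frames)[:, None]
    frames = window[idx] * np.hamming(frame_len)                # short Hamming-windowed frames
    power = np.abs(np.fft.rfft(frames, n=n_fft)) ** 2 / n_fft   # power spectrum per frame
    energies = power @ mel_filterbank(n_filters, n_fft, sr).T   # energy in each mel band
    log_energies = np.log(energies + 1e-10)                     # compress dynamic range
    return log_energies @ dct_ii_matrix(n_coeffs, n_filters).T  # keep low-order coefficients


rng = np.random.default_rng(0)
stream = rng.normal(scale=0.1, size=3 * SAMPLE_RATE)  # 3 s of synthetic noise
windows = sliding_windows(stream)
features = np.stack([mfcc(w) for w in windows])
print(windows.shape, features.shape)  # → (5, 16000) (5, 50, 13)
```

Under these assumptions, each one-second window of 16,000 raw samples is summarized by a 50 × 13 matrix, which is far less data for the classifier to process.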

From Prototype to Real-World Application

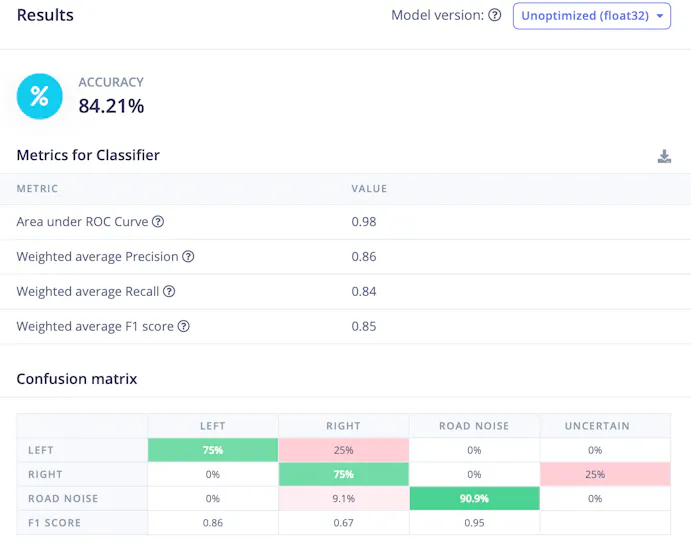

Before starting training, Sivan adjusted the learning rate and the number of training epochs. He also tweaked the architecture of the neural network, which includes a 1D convolution layer and a dropout layer, with the goal of improving the model’s accuracy. With those adjustments made, training was started with a single button click.
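For readers curious what those two layers actually compute, here is a minimal NumPy sketch of a forward pass: a 1D convolution that slides small kernels along the time axis of an MFCC matrix, a dropout layer, and a dense softmax output over three classes. The 50 × 13 input shape, 8 filters, kernel width of 3, and dropout rate of 0.25 are assumptions, and the sketch leaves out training entirely; the learning rate and epoch count Sivan tuned govern how weights like these are updated during training, which is not shown here.

```python
import numpy as np


def conv1d(x, kernels, bias):
    """'Same'-padded 1-D convolution over time, followed by ReLU.

    x: (time, in_ch); kernels: (k, in_ch, out_ch); bias: (out_ch,).
    """
    k = kernels.shape[0]
    pad = k // 2
    xp = np.pad(x, ((pad, k - 1 - pad), (0, 0)))
    out = np.stack([
        np.tensordot(xp[t:t + k], kernels, axes=([0, 1], [0, 1]))
        for t in range(x.shape[0])
    ])
    return np.maximum(out + bias, 0.0)


def dropout(x, rate, rng, training):
    """Inverted dropout: while training, zero a fraction `rate` of activations
    and scale the survivors up so that inference needs no adjustment."""
    if not training or rate == 0.0:
        return x
    keep = rng.random(x.shape) >= rate
    return x * keep / (1.0 - rate)


def softmax(z):
    e = np.exp(z - z.max())
    return e / e.sum()


def forward(mfccs, params, rng, training=False, rate=0.25):
    """Map one (frames, coeffs) MFCC matrix to class probabilities."""
    h = conv1d(mfccs, params["conv_w"], params["conv_b"])  # (frames, filters)
    h = dropout(h, rate, rng, training)
    logits = h.reshape(-1) @ params["dense_w"] + params["dense_b"]
    return softmax(logits)


rng = np.random.default_rng(0)
frames, coeffs, filters, classes = 50, 13, 8, 3  # assumed sizes; classes: left, right, noise
params = {
    "conv_w": rng.normal(scale=0.1, size=(3, coeffs, filters)),
    "conv_b": np.zeros(filters),
    "dense_w": rng.normal(scale=0.1, size=(frames * filters, classes)),
    "dense_b": np.zeros(classes),
}
probs = forward(rng.normal(size=(frames, coeffs)), params, rng)
print(probs.round(3))  # random, untrained weights, so these values are meaningless
```

Dropout only has an effect while training: by randomly silencing activations, it discourages the network from leaning too heavily on any single feature, which is one common way to reduce overfitting.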

Once training finished, summary metrics were displayed to help Sivan evaluate the model’s performance. The training accuracy was reported as 100%, so he also ran the model testing tool to see whether that excellent result would hold up on held-out test data. The test accuracy was lower, at just over 84%, but that was good enough to move forward with for the prototype. The gap between the two figures suggests, however, that a larger training dataset may be needed before a production deployment.
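One way to dig into a gap like this is to look beyond overall accuracy at a per-class confusion matrix, which shows which commands the model mixes up. The short Python sketch below computes both; the label names and the toy predictions are hypothetical and are not Sivan’s results.

```python
from collections import Counter

LABELS = ("left", "right", "noise")  # assumed class names


def accuracy(y_true, y_pred):
    """Fraction of samples whose predicted label matches the true label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def confusion(y_true, y_pred, labels=LABELS):
    """Confusion matrix: rows are true labels, columns are predicted labels."""
    counts = Counter(zip(y_true, y_pred))
    return [[counts[(t, p)] for p in labels] for t in labels]


# Hypothetical toy predictions for illustration, not the project's actual results.
test_true = ["left"] * 4 + ["right"] * 4 + ["noise"] * 4
test_pred = ["left", "left", "noise", "noise",
             "right", "right", "left", "right",
             "noise", "noise", "noise", "noise"]
train_acc = 1.0
test_acc = accuracy(test_true, test_pred)
print(f"test accuracy {test_acc:.2%}, gap {train_acc - test_acc:.2%}")
# → test accuracy 75.00%, gap 25.00%
for label, row in zip(LABELS, confusion(test_true, test_pred)):
    print(label, row)
# → left [2, 0, 2]
# → right [1, 3, 0]
# → noise [0, 0, 4]
```

In this made-up example, the model never misses road noise but mistakes ‘left’ for noise half the time, which would point toward collecting more varied ‘left’ recordings.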

Speaking of deployment, Sivan chose to load the impulse onto the hardware by downloading it as an Arduino library. This is one of the most flexible options available, since it makes it simple to add your own code that runs in response to the model’s inferences. In this case, Sivan used the results to blink the LED filament on whichever side the voice command requested.
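On a microcontroller, the blinking itself is usually written so that it does not block the main loop, letting audio capture and inference continue while the LED flashes. The Python sketch below models that idea with simulated timestamps; on an Arduino, the same logic would typically read the time from `millis()` inside `loop()` and drive the LED pins with `digitalWrite()`. The `TurnSignal` class, the 250 ms on/off timing, and the six-flash sequence are hypothetical choices, not taken from Sivan’s project.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class TurnSignal:
    half_period_ms: int = 250   # assumed on (and off) duration of each flash
    flashes: int = 6            # assumed number of flashes per voice command
    side: Optional[str] = None  # 'left', 'right', or None when idle
    start_ms: int = 0

    def trigger(self, side: str, now_ms: int) -> None:
        """Start flashing `side`; a new command restarts the sequence."""
        self.side, self.start_ms = side, now_ms

    def leds(self, now_ms: int) -> Tuple[bool, bool]:
        """Return (left_on, right_on) for the current time without blocking."""
        if self.side is None:
            return (False, False)
        phase = (now_ms - self.start_ms) // self.half_period_ms
        if phase >= 2 * self.flashes:
            self.side = None  # sequence finished; go idle
            return (False, False)
        on = phase % 2 == 0   # even phases light the LED, odd phases turn it off
        return (on and self.side == "left", on and self.side == "right")


sig = TurnSignal()
sig.trigger("right", now_ms=1000)
print([sig.leds(t) for t in (1000, 1300, 1500, 3999, 4000)])
# → [(False, True), (False, False), (False, True), (False, False), (False, False)]
```

Because `leds()` only compares timestamps, the main loop can call it on every pass between inferences, and a fresh ‘left’ or ‘right’ command simply restarts the sequence on the requested side.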

While Sivan focused on solving a big problem in this project, the same basic principles used to voice-control a helmet turn signal could be used to do everything from turning on a lamp to adjusting a thermostat.

Now that you know how it works, what will you build? If you need a few more pointers before you are ready to get started, be sure to take a look at Sivan’s project write-up.