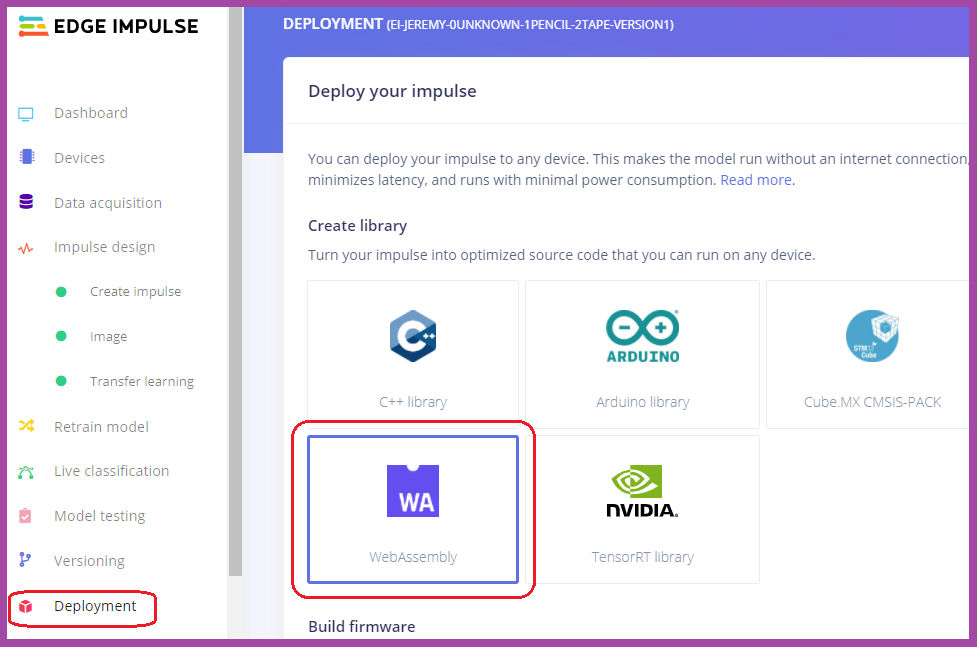

To mark my high school students’ completed Edge Impulse assignments, I have them download a WebAssembly (WASM) model, unzip the two files called “edge-impulse-standalone.js” and “edge-impulse-standalone.wasm,” and then put them in a folder on a secure (HTTPS) server. My students use GitHub, and setting up a GitHub Pages secure static web server is fairly easy.

I then get the students to upload my “index.html” file, found on my Edge Impulse GitHub downloads site, to that same folder.

The last step is to make an HTTPS link to the new folder/index.html file, wait about 60 seconds, and then test your impulse in real time. A GitHub Pages link looks very similar to a GitHub link, but the two are slightly different, so be careful.

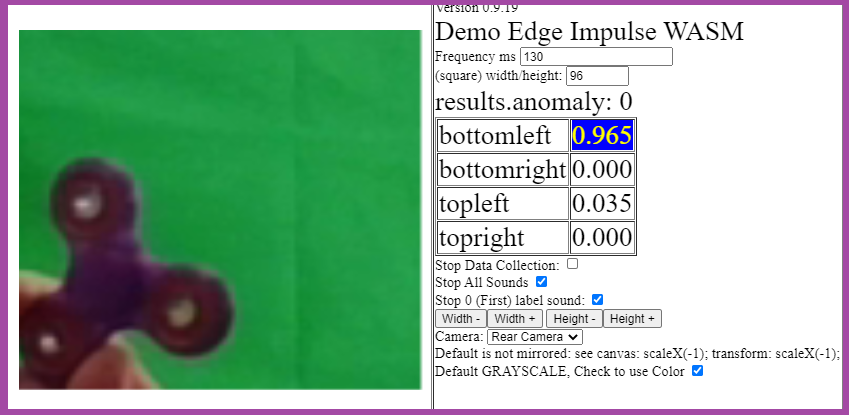
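The difference between the two kinds of link can be sketched in a few lines of TypeScript. This is only an illustration: the helper name `toPagesUrl`, the user `student`, and the repository `my-impulse` are made up for the example, and it assumes the GitHub Pages site is published from the root of the branch that appears in the GitHub link, and that the branch name contains no slashes.

```typescript
// Matches a GitHub file link of the form
// https://github.com/<user>/<repo>/blob/<branch>/<path>
const BLOB_URL = /^https:\/\/github\.com\/([^/]+)\/([^/]+)\/blob\/[^/]+\/(.+)$/;

// Hypothetical helper: turns a GitHub file link into the matching
// GitHub Pages link, or returns null if the link has a different shape.
// Assumption: Pages is served from the root of the branch in the link.
function toPagesUrl(githubUrl: string): string | null {
  if (!BLOB_URL.test(githubUrl)) {
    return null;
  }
  return githubUrl.replace(
    BLOB_URL,
    (_match, user: string, repo: string, path: string) =>
      `https://${user.toLowerCase()}.github.io/${repo}/${path}`
  );
}

// Example with a made-up student repository:
const pagesLink = toPagesUrl(
  "https://github.com/student/my-impulse/blob/main/wasm/index.html"
);
console.log(pagesLink);
```

The GitHub link shows the file's source, while the GitHub Pages link actually serves the page, which is why only the second one runs the model in the browser.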

A demo page can be viewed at: https://hpssjellis.github.io/my-examples-of-edge-impulse/public/edge-models/topleft-topright-bottomleft-bottomright/index.html

Note: My index.html file automatically loads a few drum kit sounds to work with the model. These sounds can be turned off, or you can use your own sounds. This method of seeing your impulse live gives students a much better understanding of how a robot senses the world. The students seem very interested in how fast the model does the analysis as well as how often it switches back and forth between classifications. Many students want to retrain their model after they have witnessed some classifications working much better than others.

I have my students add numbers to their labels to make aligning sounds with labels much easier. I also have them make a “0unknown” label. By default, “0unknown” does not make a sound, “1FirstLabel” plays the boom.wav audio file, “2SecondLabel” plays the kick.wav audio file, “3ThirdLabel” plays the openhat.wav audio file, etc.
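The numbered-label idea could look roughly like the TypeScript sketch below. It is not the code from my index.html file. The names `SOUNDS` and `soundForLabel` are hypothetical, and the sketch simply assumes that the number at the start of a label is used as an index into a list of sound files, with index 0 left silent.

```typescript
// Sound files in label order; index 0 ("0unknown") is intentionally silent.
// The file names come from the text; the list itself is an assumption.
const SOUNDS: ReadonlyArray<string | null> = [
  null,
  "boom.wav",
  "kick.wav",
  "openhat.wav",
];

// Hypothetical helper: reads the leading number from a label such as
// "2SecondLabel" and returns the sound file at that index.
// Returns null when the label has no leading number, when the number
// has no matching sound, or when the matching entry is silent.
function soundForLabel(label: string): string | null {
  const digits = /^\d+/.exec(label);
  if (digits === null) {
    return null;
  }
  const index = Number(digits[0]);
  return SOUNDS[index] ?? null;
}

for (const label of ["0unknown", "1FirstLabel", "2SecondLabel", "3ThirdLabel"]) {
  console.log(label, "->", soundForLabel(label));
}
```

Because the number carries the mapping, students can rename the rest of the label freely without breaking the link between a classification and its sound.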

Below, you’ll find a video that I created about putting Edge Impulse onto the browser.

Jeremy Ellis (@rocksetta) is an Edge Impulse ambassador and technology teacher in BC, Canada. He is working on a high school robotics and machine learning curriculum using the Arduino Portenta H7 with the LoRa Vision Shield and lots of other sensors and actuators.
