Want to use a novel ML architecture, or load your own transfer learning models into Edge Impulse? Create a custom learning block! This feature, which was released during Imagine 2022, lets you bring any training pipeline into the Studio (whether written in PyTorch, Keras or scikit-learn) and then treat it like any of our built-in model types. That means you can retrain with your own data, and deploy to every MCU or MPU under the sun with full hardware acceleration.

Want to get started? We have end-to-end examples of doing this in Keras, PyTorch and scikit-learn.

Previously we required you to convert your trained model to TensorFlow Lite in the training pipeline, which was quite a nuisance for any pipeline without built-in support for this (e.g. many PyTorch models). But… not anymore. We now support native ingestion of ONNX files. When your training pipeline outputs ONNX files, the Studio automatically quantizes your model and performs any input order conversion where needed (e.g. NCHW => NHWC).
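To make the input order conversion concrete, here is a minimal sketch of what reordering a tensor from NCHW (the usual PyTorch layout) to NHWC (the usual TensorFlow Lite layout) means for a flat buffer. This is only an illustration in plain Python, not how the Studio implements it; the function name `nchw_to_nhwc` and the sample data are made up for this example.

```python
def nchw_to_nhwc(flat, n, c, h, w):
    """Reorder a flat buffer from NCHW layout to NHWC layout.

    Hypothetical helper for illustration only; not part of any Edge Impulse API.
    """
    if len(flat) != n * c * h * w:
        raise ValueError("buffer length does not match the given shape")

    out = [None] * len(flat)
    for ni in range(n):
        for ci in range(c):
            for hi in range(h):
                for wi in range(w):
                    # Offset of element (ni, ci, hi, wi) in channels-first order
                    src = ((ni * c + ci) * h + hi) * w + wi
                    # Offset of the same element in channels-last order
                    dst = ((ni * h + hi) * w + wi) * c + ci
                    out[dst] = flat[src]
    return out


# One 2x2 image with 2 channels, stored channel by channel (NCHW):
# channel 0 = [1, 2, 3, 4], channel 1 = [10, 20, 30, 40]
pixels = [1, 2, 3, 4, 10, 20, 30, 40]
converted = nchw_to_nhwc(pixels, n=1, c=2, h=2, w=2)
print(converted)  # [1, 10, 2, 20, 3, 30, 4, 40]
```

In NHWC order the values for each pixel sit next to each other, so the two channels are interleaved. Because the Studio handles this conversion for ONNX models, your training pipeline should not need to do it itself.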

Ready to get started? Check out our getting started guide, and view our example code repositories for various model architecture implementations:

- YOLOv5 - wraps the Ultralytics YOLOv5 repository (trained with PyTorch) to train a custom transfer learning model.

- EfficientNet - a Keras implementation of transfer learning with EfficientNet B0.

- Keras - a basic multi-layer perceptron in Keras and TensorFlow.

- PyTorch - a basic multi-layer perceptron in PyTorch.

- Scikit-learn - trains a logistic regression model using scikit-learn, then uses jax to output a TFLite file for inferencing.

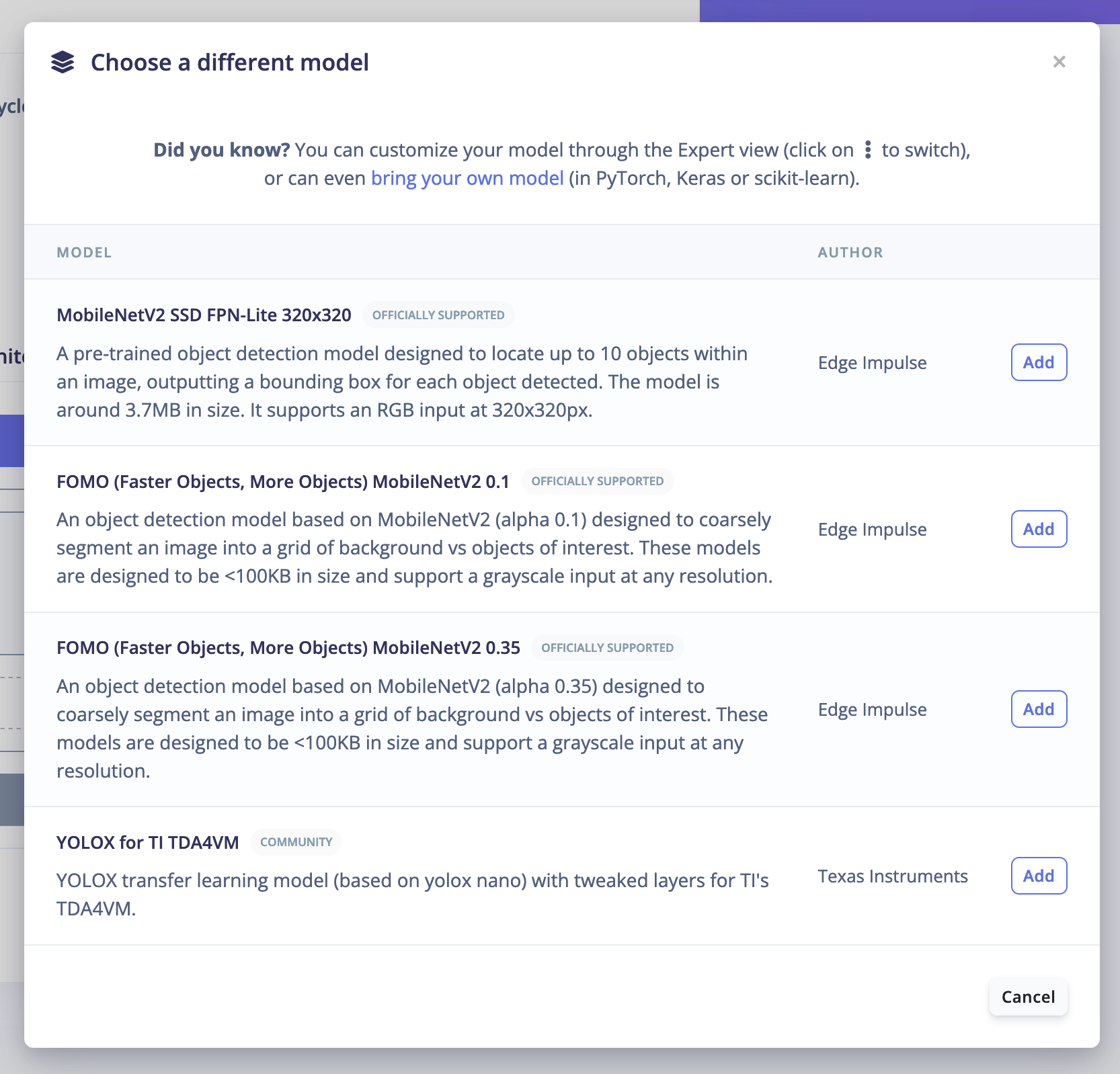

Once you’ve followed the getting started guide and hosted your novel ML architecture in your Edge Impulse account, you’ll be able to select your model from the “Choose a different model” view in your Edge Impulse project:

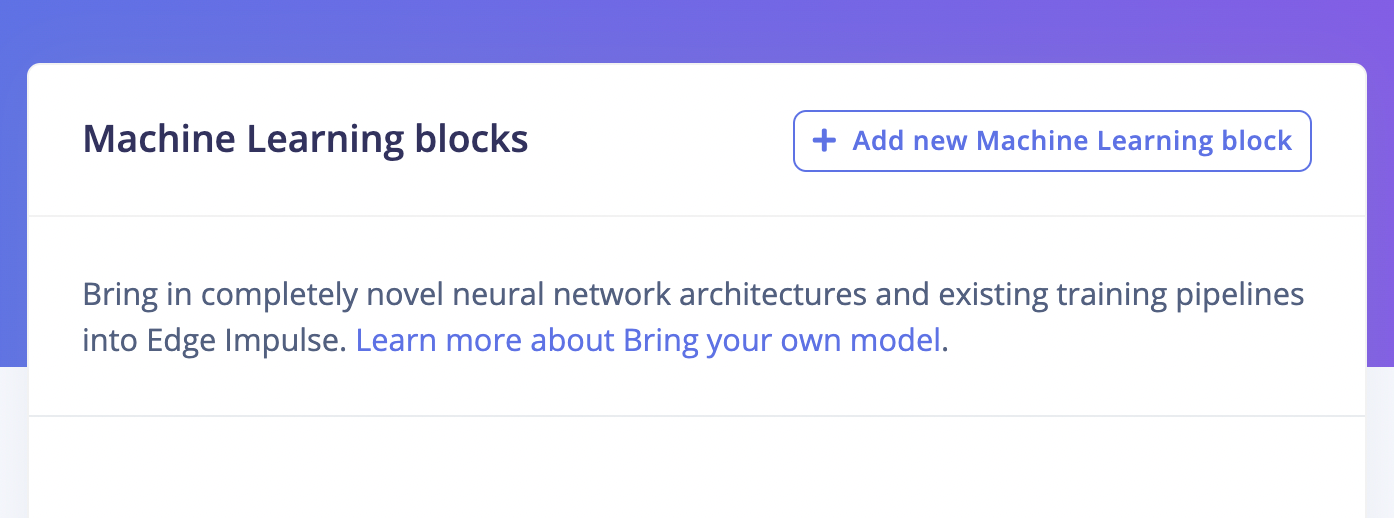

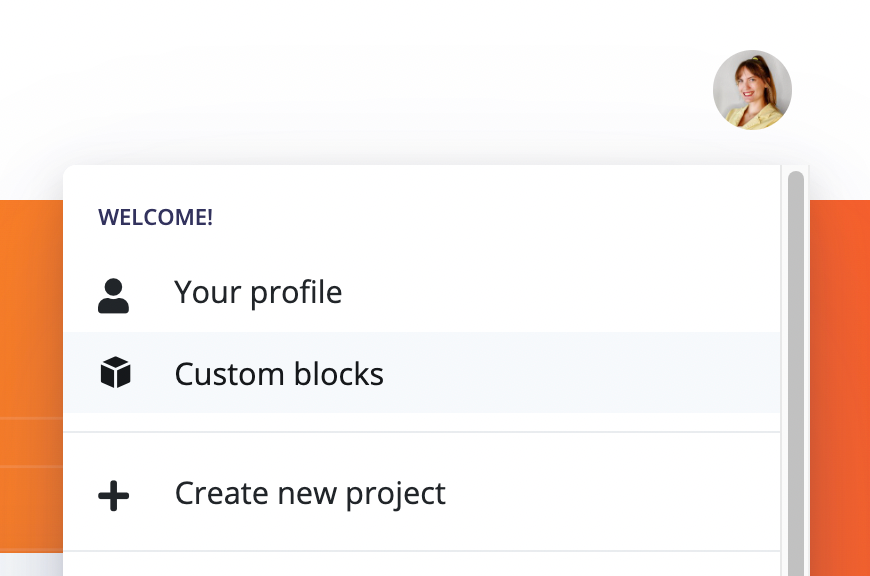

You can also view all of your available custom models in your Edge Impulse account directly through your profile, or by clicking on your account picture: https://studio.edgeimpulse.com/studio/profile/machine-learning-blocks

We are excited to see what custom models you bring into Edge Impulse — share your project with us on social media @Edge Impulse!