One research and development project I have been working on over the last eight months with a longtime friend is an agricultural robot called MARV-bot intended to support crop trials.

Crop trials are intensive operations: the growth of each crop needs to be recorded accurately, and known irrigation levels need to be applied, to help quantify the best growing conditions for the crops.

Crop trials are also often performed in remote locations, which makes access difficult for the trials team. Combined, these issues make crop trials a costly and intensive operation.

Like many industries, agriculture is seeing a robotic revolution. Agricultural robots are helping farmers pick crops and remove weeds. Dedicated trials robots can not only provide irrigation and spraying but also accurately identify and analyze each individual crop.

Due to their remote locations, agricultural robots need to operate with constrained power budgets. As navigation and communication are critical systems, this limits the power available for the other embedded systems deployed on the robot. The remote locations also mean agricultural robots need to leverage modern communication infrastructure. This infrastructure enables near-real-time telemetry and analysis results to be communicated to the operations center. Depending on the deployment location, this could be either terrestrial or satellite 5G. Of course, GNSS is also used to provide an accurate location for the robot and can be used to tag the location of the crops as well.

The trials robot Adiuvo has been supporting is developed by Robotic System Ltd. The robot is designed to straddle a standard trials patch. As such, most of the robot is located above the crops, enabling it not only to spray the crops but also to image them from above. The robot has two modes: spraying, where it travels at the required rate of 3 MPH, and imaging, where it moves at a slower speed to image and analyze the crops, taking time to ensure accuracy. Currently, version one of the robot exists, which can be seen in the video.

The imaging system is designed to be added onto the trials robot and to provide illumination, image capture, and processing. A key element of the imaging and analysis mode is the ability for several parallel embedded systems to identify and image the plants and perform any necessary analysis. The images can then be stored locally, and the analyzed data can be sent back over the communications link.

As this trials robot is a prototype currently in development, off-the-shelf development boards are critical to developing the imaging system within a reasonable timescale and at acceptable risk.

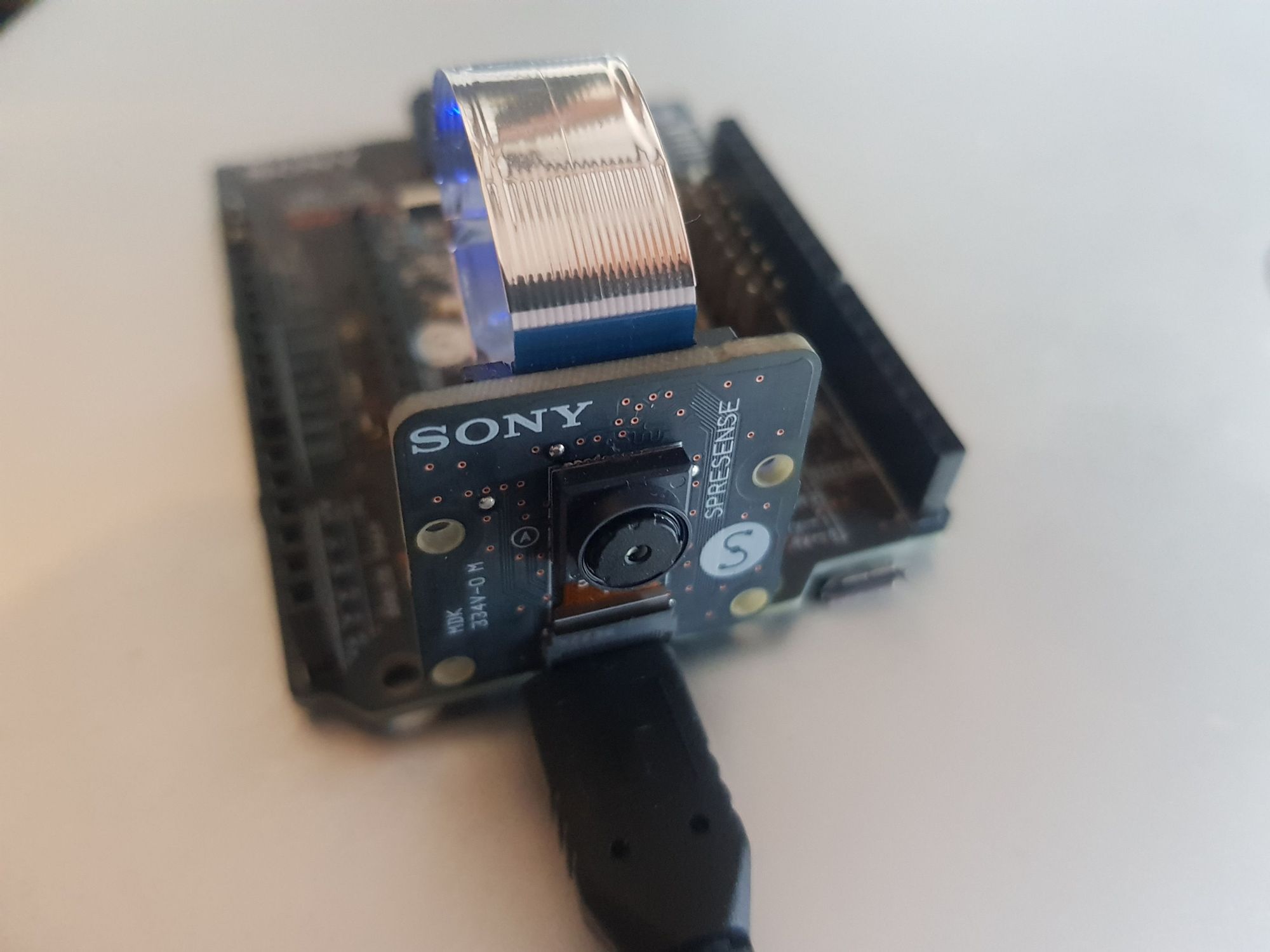

There is a range of boards that I had been considering for this application; however, Edge Impulse recently sent me a Sony Spresense board, which not only has a built-in GPS antenna but is also able to connect to a 5MP camera. It’s very compact, which will allow the deployment of multiple systems without challenging the power budget.

Coupled with the recently announced support for the Sony Spresense by Edge Impulse, I thought it would make a good development board to use for initial testing.

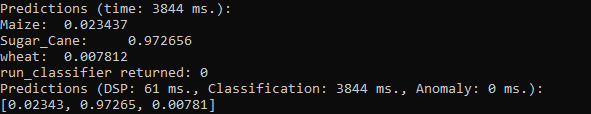

We can use the camera to capture images of the crops, while the GPS can be used to identify the location of the crop in question. Thanks to Edge Impulse, we are also able to run ML algorithms on the Sony Spresense to identify the crop correctly or to detect whether weeds or other unwanted crops are growing.
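The pairing of a classification with a location can be sketched as a small record that is formatted for the communications link. This is only an illustration under stated assumptions: `GnssFix`, `CropObservation`, and `to_telemetry_line` are hypothetical names, not part of the Spresense SDK or the Edge Impulse library, and the CSV layout is an arbitrary choice rather than the format used on the robot.

```cpp
#include <cassert>
#include <cstdio>
#include <string>

// Hypothetical types for illustration only; they do not come from the
// Spresense SDK or the Edge Impulse library.
struct GnssFix {
    double latitude_deg;
    double longitude_deg;
    bool valid;  // false until the receiver reports a position fix
};

struct CropObservation {
    std::string label;  // class name reported by the classifier
    float confidence;   // classifier score, expected in the range 0..1
    GnssFix fix;        // where the image was captured
};

// Format one observation as "label,confidence,latitude,longitude".
// Without a valid fix the position fields are left empty instead of
// reporting a misleading 0,0 coordinate. Labels long enough to overflow
// the 128-byte buffer would be truncated by snprintf.
std::string to_telemetry_line(const CropObservation& obs) {
    // A score outside 0..1 points to a bug upstream of this function.
    assert(obs.confidence >= 0.0f && obs.confidence <= 1.0f);
    char buf[128];
    if (obs.fix.valid) {
        std::snprintf(buf, sizeof(buf), "%s,%.2f,%.6f,%.6f",
                      obs.label.c_str(), obs.confidence,
                      obs.fix.latitude_deg, obs.fix.longitude_deg);
    } else {
        std::snprintf(buf, sizeof(buf), "%s,%.2f,,",
                      obs.label.c_str(), obs.confidence);
    }
    return std::string(buf);
}
```

Sending a short text record like this, rather than the image itself, fits the approach described earlier of storing images locally and returning only the analyzed data over the link.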

With that in mind, I set out to develop an ML application demonstration for use on the trials robot, which can classify the types of crops.

Getting started with this is straightforward. For this demonstration, I wanted to focus on three different types of crops: wheat (there are plenty of wheat fields growing near me), maize, and sugarcane. In the actual deployment, I envisage the ML algorithm being switched out depending on the crop in question, which is another reason why the ability to easily generate ML algorithms is critical to supporting potential future users.

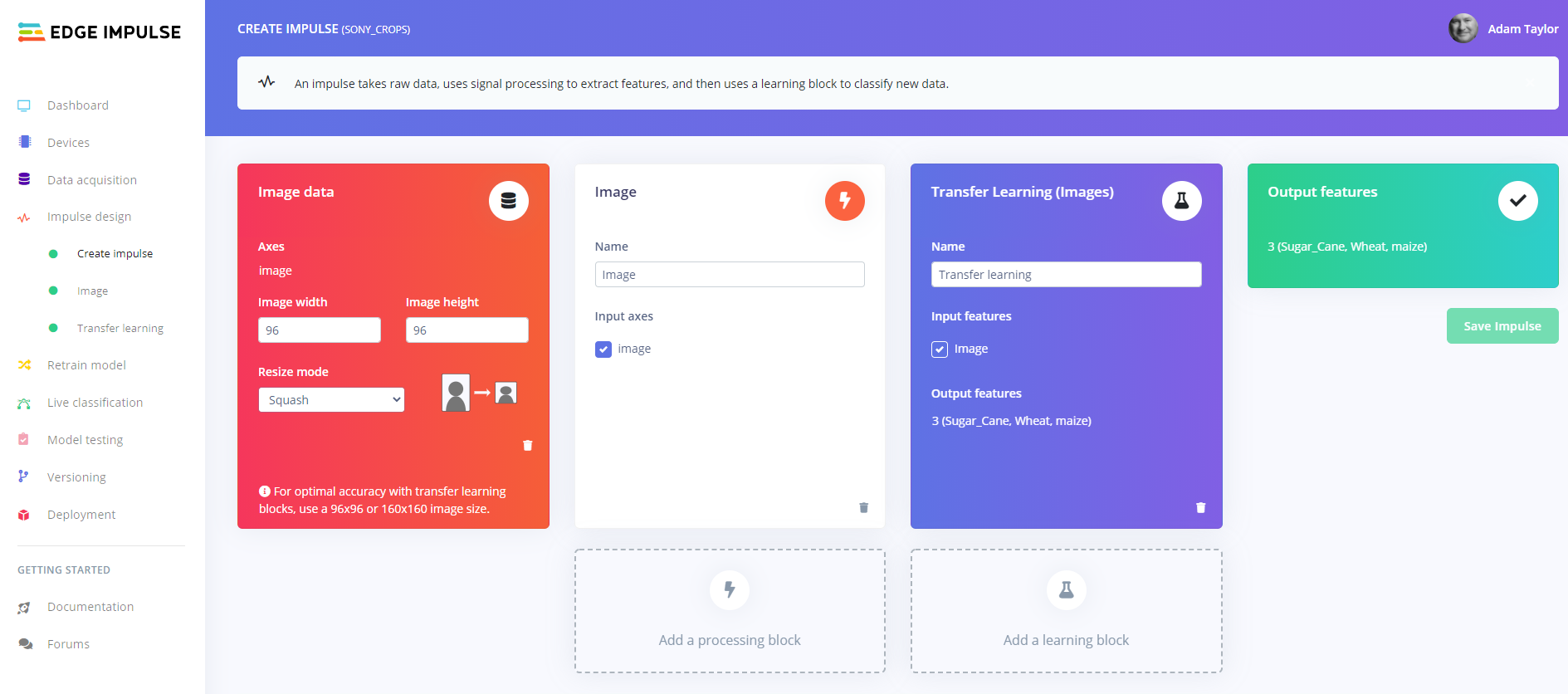

To get started with this I needed a dataset of the different crops, which I obtained from Kaggle. Importing this dataset into Edge Impulse, I was then able to define and train a machine learning solution that can run on the Sony Spresense to classify the crops in question.

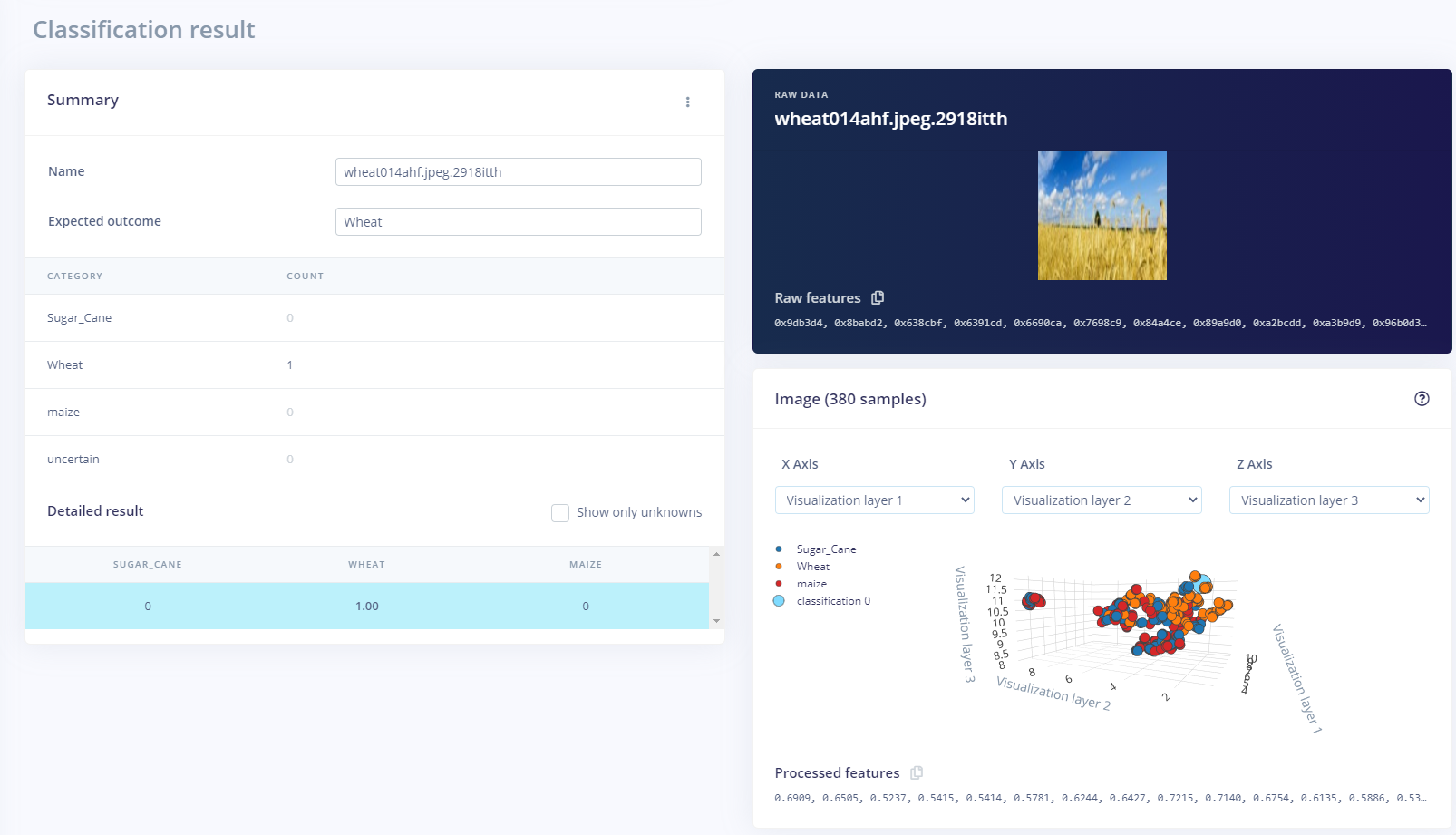

We can test the algorithm using the test data in live classification, or capture images directly from the Sony Spresense using the Edge Impulse daemon.

What is nice about the Edge Impulse solution is that it provides a C++ library, which can be extended during development to complete the final algorithm.
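As one example of the kind of logic that might be built around the exported library, the sketch below picks the most likely crop from a set of per-class scores and reports an uncertain result when no class is confident enough. `ClassScore` and `pick_label` are hypothetical names standing in for the classifier output; they are not the Edge Impulse API, and the threshold value is an assumption that would need tuning against real field images.

```cpp
#include <cassert>
#include <cstddef>
#include <string>
#include <vector>

// Hypothetical stand-in for one entry of the classifier output:
// a class name and its score.
struct ClassScore {
    std::string label;
    float value;
};

// Return the highest-scoring label, or "uncertain" when even the best
// score is below min_confidence. On ties, the earliest entry wins.
std::string pick_label(const std::vector<ClassScore>& scores,
                       float min_confidence) {
    assert(!scores.empty());  // the classifier always reports at least one class
    std::size_t best = 0;
    for (std::size_t i = 1; i < scores.size(); ++i) {
        if (scores[i].value > scores[best].value) {
            best = i;
        }
    }
    if (scores[best].value >= min_confidence) {
        return scores[best].label;
    }
    return "uncertain";
}
```

Returning an explicit uncertain result, instead of always reporting the top class, gives the operations center a way to flag plants that may need a second look.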

We still have a little way to go in developing version two of the robot, but work is under way.

This project has shown the benefit edge ML can have in a real-world application. If you are interested in creating a similar solution, I have written step-by-step instructions on how to do this. They are available here, while the video below shows a walkthrough of how to create the project using the Edge Impulse Studio.

I am really looking forward to being able to try out the developed algorithm on the robot as we move towards testing in the field!